11+ creative writing guide with 50 example topics and prompts

by Hayley | Nov 17, 2022 | Exams, Writing

The 11+ exam is a school entrance exam taken in the academic year that a child in the UK turns eleven.

These exams are highly competitive, with multiple students battling for each school place awarded.

The 11 plus exam isn’t ‘one thing’; it varies in its structure and composition across the country. A creative writing task is included in nearly all of the 11 plus exams, and parents are often confused about what’s being tested.

Don’t be fooled into thinking that the plot of your child’s writing task is important. It is not.

The real aim of the 11+ creative writing task is to showcase your child’s writing skills and techniques.

And that’s why preparation is so important.

This guide begins by answering all the FAQs that parents have about the 11+ creative writing task.

At the end of the article I give my best tips & strategies for preparing your child for the 11+ creative writing task, along with 50 fiction and non-fiction creative writing prompts from past papers you can use to help your child prepare. You’ll also want to check out my 11+ reading list, because great readers turn into great writers.

Do all 11+ exams include a writing task?

Not every 11+ exam includes a short story component, but many do. Usually 3 to 5 different prompts are given for the child to choose between and they are not always ‘creative’ (fiction) pieces. One or more non-fiction options might be given for children who prefer writing non-fiction to fiction.

Timings and marking vary from test to test. For example, the Kent 11+ Test gives students 10 minutes for planning followed by 30 minutes for writing. The Medway 11+ Test gives 60 minutes for writing with ‘space allowed’ on the answer booklet for planning.

Tasks vary too. In the Kent Test a handful of stimuli are given, whereas 11+ students in Essex are asked to produce two individually set paragraphs. The Consortium of Selective Schools in Essex (CSSE) includes 2 creative writing paragraphs inside a 60-minute English exam.

Throughout the UK each 11+ exam has a different set of timings and papers based around the same themes. Before launching into any exam preparation it is essential to know the content and timing of your child’s particular writing task.

However varied and different these writing tasks might seem, there is one key element that binds them.

The mark scheme.

Although we can lean on previous examples to assess how likely it is that a short story or a non-fiction task will be set, it would be naïve to rely completely on the content of past papers. Contemporary 11+ exams are designed to be ‘tutor-proof’, meaning that the exam boards like to be unpredictable.

In my online writing club for kids, we teach a different task each week (following a spiral learning structure based on 10 set tasks). One task per week is perfected as the student moves through the programme of content, and one-to-one expert feedback ensures progression. This equips our writing club members to ‘write effectively for a range of purposes’ as stated in the English schools’ teacher assessment framework.

This approach ensures that students approaching a highly competitive entrance exam will be confident of the mark scheme (and able to meet its demands) for any task set.

Will my child have a choice of prompts to write from or do they have to respond to a single prompt, without a choice?

This varies. In the Kent Test there are usually 5 options given. The purpose is to gather a writing sample from each child in case of a headteacher appeal. A range of options should allow every child to showcase what they can do.

In Essex, two prescriptive paragraphs are set as part of an hour-long English paper that includes comprehension and vocabulary work. In Essex, there is no option to choose the subject matter.

The Medway Test just offers a single prompt for a whole hour of writing. Sometimes it is a creative piece. Recently it was a marketing leaflet.

The framework for teaching writing in English schools demands that, in order to ‘exceed expectations’ or, better, achieve ‘greater depth’, students need to be confident writing for a multitude of different purposes.

In what circumstances is a child’s creative writing task assessed?

In Essex (east of the UK) the two prescriptive writing tasks are found inside the English exam paper. They are integral to the exam and are assessed as part of this.

In Medway (east Kent in the South East) the writing task is marked and given a raw score. This is then adjusted for age and double counted. Thus, the paper is crucial to a pass.

In the west of the county of Kent there is a different system. The Kent Test has a writing task that is only marked in appeal cases. If a child dips below the passmark, their school is allowed to put together a ‘headteacher’s appeal’. At this point, before the score is communicated to the parent (and probably under cover of darkness), the writing sample is pulled out of a drawer and assessed.

I’ve been running 11+ tutor clubs for years. Usually about 1% of my students passed at headteacher’s appeal.

Since starting the writing club, however, the number of students passing at appeal has gone up considerably. In recent years it’s been more like 5% of students passing on the strength of their writing sample.

What are the examiners looking for when they’re marking a student’s creative writing?

In England, the government has set out a framework for marking creative writing. There are specific ‘pupil can’ statements to assess whether a student is ‘working towards the expected standard,’ ‘working at the expected standard’ or ‘working at greater depth’.

Members of the headteacher panel assessing the writing task are given a considerable number of samples to assess at one time. These expert teachers have a clear understanding of the framework for marking, but will not be considering or discussing every detail of the writing sample as you might expect.

Schools are provided with a report after the samples have been assessed. This is very brief indeed. Often it will simply say ‘lack of precise vocabulary’ or ‘confused paragraphing.’

So there is no mark scheme as such. They won’t be totting up your child’s score to see if they have reached a given target. They are on the panel because of their experience, and they have a short time to make an instant judgement.

Does handwriting matter?

Handwriting is assessed in primary schools. Thus it is an element of the assessment framework the panel uses as a basis for their decision.

If the exam is very soon, then don’t worry if your child is not producing immaculate, cursive handwriting. The focus should simply be on making it well-formed and legible. Not every element of the assessment framework needs to be met, and legible writing will allow the panel to read the content with ease.

Improve presentation quickly by offering a smooth rollerball pen instead of a pencil. Focus on fixing individual letters and praising your child for any hint of effort. The two samples below are from the same boy a few months apart. Small changes have transformed the look and feel:

Sample 1: First piece of work when joining the writing club

Sample 2: This is the same boy’s improved presentation and content

How long should the short story be?

First, it is not a short story as such—it is a writing sample. Your child needs to showcase their skills but there are no extra marks for finishing (or marks deducted for a half-finished piece).

For a half-hour task, you should prepare your child to produce up to 4 paragraphs of beautifully crafted work. Correct spelling and proper English grammar are just the beginning. Each paragraph should have a different purpose to showcase the breadth and depth of their ability. A longer (60-minute) task might have 5 paragraphs, but rushing is to be discouraged. Considered and interesting paragraphs are so valuable that a shorter piece would be scored more highly than a rushed and dull longer piece.

I speak from experience. A while ago now I was a marker for Key Stage 2 English SATs Papers (taken in Year 6 at 11 years old). Hundreds of scripts were deposited on my doorstep each morning by DHL. There was so much work for me to get through that I came to dread long, rambling creative pieces. Some children can write pages and pages of repetitive nothingness. Ever since then, I have looked for crafted quality and am wary of children judging their own success by the number of lines completed.

Take a look at the piece of writing below. It’s an excellent example of a well-crafted piece.

Each paragraph is short, but the writer is skilful.

He has used rich and precisely chosen vocabulary, he has broken the text into natural paragraphs, and in the second paragraph he is beginning to vary his sentence openings. There is a sense of control to the sentences: the structure varies, with shorter and longer examples used to manage tension. It is exciting to read, with a clear awareness of his audience. Punctuation is accurate and appropriate.

11+ creative writing example story

How important is it to revise for a creative writing task?

It is important.

Every student should go into their 11+ writing task with a clear paragraph plan secured. As each paragraph has a separate purpose – to showcase a specific skill – the plan should reflect this. Built into the plan is a means of flexing it, to alter the order of the paragraphs if the task demands it. There’s no point having a Beginning – Middle – End approach, as there’s nothing useful there to guide the student to the mark scheme.

Beyond this, my own students have created 3 – 5 stories that fit the same tight plan. However, the setting, mood and action are all completely different. This way, they have already explored a bank of rich vocabulary and developed a technique or two of their own that fits the piece beautifully. These can be drawn upon on the day to boost confidence and give a greater sense of depth and consideration to their timed sample.

Preparation, rather than revision in its classic form, is the best approach. Over time, even weeks or months before the exam itself, contrasting stories are written, improved upon, typed up and then tweaked further as better ideas come to mind. Each of these meets the demands of the mark scheme (paragraphing, varied sentence openings, rich vocabulary choices, considered imagery, punctuation to enhance meaning, development of mood, etc.).

To ensure your child can write confidently at and above the level expected of them, drop them into my weekly online writing club for the 11+ age group. The club marking will transform their writing, and quickly.

What is the relationship between the English paper and the creative writing task?

Writing is usually marked separately from any comprehension or grammar exercises in your child’s particular 11+ exam. Each exam board (by area/school) adapts the arrangement to suit their needs. Some have a separate writing test, others build it in as an element of their English paper (usually alongside a comprehension, punctuation and spelling exercise).

Although there is no creative writing task in the ISEB Common Pre-test, those who are not offered an immediate place at their chosen English public school are often invited back to complete a writing task at a later date. Our ISEB Common Pre-test students join the writing club in the months before the exam, first to tidy up the detail and second to extend the content.

What if my child has a specific learning difficulty (dyslexia, ADD/ADHD, ASD)?

Most exam boards pride themselves on their inclusivity. They will expect you to have a formal report from a qualified professional at the point of registration for the test. This needs to be in place and the recommendations will be considered by a panel. If your child needs extra arrangements on the day they may be offered (it isn’t always the case). More importantly, if they drop below a pass on one or more papers you will have a strong case for appeal.

Children with a specific learning difficulty often struggle with low confidence in their work and low self-esteem. The preparations set out above, and a kids’ writing club membership, will allow them to go into the exam feeling positive and empowered. If they don’t achieve a pass at first, the writing sample will add weight to their appeal.

Tips and strategies for writing a high-scoring creative writing paper

- Read widely for pleasure. Read aloud to your child if they are reluctant.

- Create a strong paragraph plan where each paragraph has a distinct purpose.

- Using the list of example questions below, discuss how each could be written in the form of your paragraph plan.

- Write 3-5 stories with contrasting settings and action – each one must follow your paragraph plan. Try to include examples of literary devices and figurative language (metaphor, simile) but avoid clichés.

- Tidy up your presentation. Write with a good rollerball pen on A4 lined paper with a printed margin. Cross out with a single horizontal line and banish doodling or scribbles.

- Join the writing club for a 20-minute Zoom task per week with no finishing off or homework. An expert English teacher will mark the work personally on video every Friday and your child’s writing will be quickly transformed.

Pressed for time? Here’s a paragraph plan to follow.

At Griffin Teaching we have an online writing club for students preparing for the 11 plus creative writing task. We’ve seen first-hand what a difference just one or two months of weekly practice can make.

That said, we know that a lot of people reading this page are up against a hard deadline with an 11+ exam date fast approaching.

If that’s you (or your child), what you need is a paragraph plan.

Here’s one tried-and-true paragraph plan that we teach in our clubs. Use this as you work your way through some of the example prompts below.

11+ creative writing paragraph plan

Paragraph 1—description.

Imagine standing in the location and describe what is above the main character, what is below their feet, what is to their left and right, and what is in the distance. Try to integrate fronted adverbials into this paragraph (fronted adverbials are words or phrases used at the beginning of a sentence to describe what follows, e.g. When the fog lifted, he saw…).

Paragraph 2—Conversation

Create two characters who have different roles (e.g. site manager and student, dog walker and lost man) and write a short dialogue between them. Use what we call the “sandwich layout,” where the first person says something and you describe what they are doing while they are saying it. Add in further descriptions (perhaps of the person’s clothing or expression) before starting a new line where the second character gives a simple answer and you provide details about what the second character is doing as they speak.

Paragraph 3—Change the mood

Write three to four sentences that change the mood of the writing sample from light to gloomy or foreboding. You could write about a change in the weather or a change in the lighting of the scene. Another approach is to mention how a character reacts to the change in mood, for example by pulling their coat collar up to their ears.

Paragraph 4—Shock your reader

A classic approach is to have your character die unexpectedly in the final sentence. Or maybe the ceiling falls?

11+ creative writing questions from real papers—fictional prompts

- The day the storm came

- The day the weather changed

- The snowstorm

- The rainy day

- A sunny day out

- A foggy (or misty) day

- A day trip to remember

- The first day

- The day everything changed

- The mountain

- The hillside

- The old house

- The balloon

- The old man

- The accident

- The unfamiliar sound

- A weekend away

- Moving house

- A family celebration

- An event you remember from when you were young

- An animal attack

- The school playground at night

- The lift pinged and the door opened. I could not believe what was inside…

- “Run!” he shouted as he thundered across the sand…

- It was getting late as I dug in my pocket for the key to the door. “Hurry up!” she shouted from inside.

- I know our back garden very well, but I was surprised how different it looked at midnight…

- The red button on the wall has a sign on it saying, ‘DO NOT TOUCH.’ My little sister leant forward and hit it hard with her hand. What happened next?

- Digging down into the soft earth, the spade hit something metal…

- Write a story which features the stopping of time.

- Write a story which features an unusual method of transport.

- The cry in the woods

- Write a story which features an escape

11+ creative writing questions from real papers—non-fiction prompts

- Write a thank you letter for a present you didn’t want.

- You are about to interview someone for a job. Write a list of questions you would like to ask the applicant.

- Write a letter to complain about the uniform at your school.

- Write a leaflet to advertise your home town.

- Write a thank you letter for a holiday you didn’t enjoy.

- Write a letter of complaint to the vet after an unfortunate incident in the waiting room.

- Write a set of instructions explaining how to make toast.

- Describe the room you are in.

- Describe a person who is important to you.

- Describe your pet or an animal you know well.

AQA GCSE English Language Paper 1 Question 5

Here’s a descriptive writing example answer that I completed in timed conditions for AQA English Language Paper 1, Question 5. This question is worth HALF of your marks for the entire paper, so getting it right is crucial to receiving a high grade overall for your English GCSE. Underneath the answer, I’ll provide some feedback and analysis on why this piece would receive a top mark grade (around 38–40/40).

Basic Descriptive Writing

“There’s an old house at the bottom of our road, so overgrown by giant twisted willow trees that you’d almost not realise it’s there if you passed. A grand old house, it must have once been owned by rich aristocrats; if you stare at it long enough you can just about imagine how they would have been a hundred years ago — swanning around in floaty silk dresses and smart wool suits, lounging on the swing in the veranda, sipping champagne and listening to jazz music well into the small hours of the morning.

But now, that swing is a rotten, splintered board barely held by frayed old ropes; it squeaks loudly as it sways in the breeze. The surrounding yard is replete with piles of rotten leaves and tall wisps of uncut grass. The whole house is crooked. It looks as if it’s sinking. The roof sags and dips inwards, like it can’t cope with life anymore and it just wants to crumble back into dust. On the exterior, the paint has almost all flaked off, giving a pixelated effect to the house: a glitch in a video game, it doesn’t belong in this world. The windows are opalescent from dust, and occasionally a pallid glow emanates from one of the larger windows on the bottom floor, followed by the hunched, aged silhouette of a man: Mr Grimshaw.

Mr Grimshaw’s the reason we go there, really. I don’t know what it is exactly, but he’s just fascinating to watch.

We don’t even know if Grimshaw’s his real name; that’s just what everyone around here calls him. A few of us dare each other to climb over the iron gates and sneak about the yard, getting as close to the house as we can without being seen. It’s a kind of ‘Grandpa’s footsteps’, I suppose. The furthest any of us ever make it is climbing up into the curled branches of the willows, which stop about halfway into the yard from the fence.

We sneak up into the willows and watch Mr Grimshaw most weekends (there’s not much else to do in our town). It’s like a doll’s house, but a living, breathing one. And much creepier, too, especially because half of the windows are a blur. You can just about make out the old furniture and faded decor in the rooms, once meticulously decorated yet now fallen into disrepair. He’s always moving between them, like a theatre set — he shuffles about in a frayed paisley smoking jacket — which I’m sure he must have stolen from one of the ornate armoires in the upstairs bedrooms.

Mostly, to amuse ourselves, we compete by making derogatory comments and sly, ironic witticisms about Grimshaw’s every hunched and creaky shuffle: “What a WEIRDO!”, “Oh he’s back in the attic again, fourth time today”, “Doesn’t he ever sleep? He’s the undead, I swear!”, that sort of thing. We often make up stories about him: he’s an old wizard, muttering spells and curses under his breath at anyone who dares cross into his territory. He’s a ghost doomed to wander the ramshackle halls of his former estate for eternity, and only those pure of heart can see or speak to him. He’s a hobo who got lucky and, finding the place abandoned, set up a little nest for himself there.

But today feels different, somehow. Today, we’re silent. The willows rustle; we listen. With a slow creak that’s straight out of a horror film, the gnarled front door swings open, and we get a close-up of Mr Grimshaw for the very first time. He looks taller now, less crippled yet still leaning slightly onto his black walking stick, his gnarled and veiny hand resting on its ivory carved top. His eyes are bright blue and shimmering, like a glacier, and they’re open very wide, so that you can see the whites of his eyeballs. Hobbling in a firm, resolute manner, he starts off down the steps of the veranda, roughly following the worn, leaf-littered path up to his letter box. By the time he gets there he’s panting heavily; we can hear him rasping even over the whispering trees.

He opens the box with a key and it springs apart with a neat ‘click’. There’s nothing inside. He’s still for a moment, then he collapses to the ground, wheezing and coughing. We watch him scrunch his face into an even wrinklier ball than usual, and with a grunt try to push himself up on his stick. Defeated, he falls back to the floor with a slump.

We’re speechless. In all our hours of watching Mr Grimshaw, we’ve never seen him like this. I’m not sure who makes the first move, but soon we’re all sliding down the tree trunk and rushing over to help him. Between the three of us, we manage to lift him up and get him on his feet. His arms seem so frail, and he’s as light as the breeze itself.

“Thank you for your assistance, kind gentlemen”, he says, still panting slightly. “Would you care to pop in for a spot of tea? It’s been so long since I’ve had any company.”

Silently, we nod and the four of us walk into his house together.”

MARKING AND FEEDBACK

There are a few reasons why this piece would receive a high grade. Here is a breakdown of the main techniques that were used:

- 5 types of imagery — visual, auditory, olfactory, gustatory, tactile

- A range of poetic devices — simile, metaphor, repetition, alliteration, symbolism, motif, specific and unusual vocabulary choices, extended descriptions and more

- A control over structural devices — range of punctuation, mixture of prose and dialogue, clear pacing (short and long sentences), range of paragraph lengths, capitalised words

- Developed control over tone (a shift in tone as the piece develops), style, setting and characterisation

- A clear shape to the description, including shifts of focus, without the piece feeling like a full story or narrative

- A sense of deeper themes and ideas, as well as a clear thematic statement — don’t judge others or mock them if you don’t know them well, they may need your help instead

© Copyright Scrbbly 2022

AQA GCSE English Language Paper 1 Question 5 Model creative responses

Subject: English

Age range: 14-16

Resource type: Lesson (complete)

Last updated

22 February 2018

Student Writing Models

How do I use student models in my classroom?

When you need an example written by a student, check out our vast collection of free student models. Scroll through the list, or search for a mode of writing such as “explanatory” or “persuasive.”

Explanatory Writing

- How Much I Know About Space Explanatory Paragraph

- My Favorite Pet Explanatory Paragraph

- Sweet Spring Explanatory Paragraph

Narrative Writing

- A Happy Day Narrative Paragraph

- My Trip to Mexico Narrative Paragraph

Creative Writing

- Happy Easter Story Paragraph

- Leaf Person Story

Research Writing

- Parrots Report

- If I Were President Explanatory Paragraph

- My Dad Personal Narrative

- The Horrible Day Personal Narrative

Response to Literature

- One Great Book Book Review

- A Fable Story

- Ant Poem Poem

- The Missing Coin Story

- Winter Words Poem

- Horses Report

- Ladybugs Report

- How to Make Boiled Eggs How-To

Persuasive Writing

- Plastic, Paper, or Cloth? Persuasive Paragraph

- The Funny Dance Personal Narrative

- The Sled Run Personal Narrative

- Hello, Spring! Poem

- Cheetahs Report

Business Writing

- Dear Ms. Nathan Email

- My Favorite Place to Go Description

- My Mother Personal Essay

- Rules Personal Essay

- Shadow Fort Description

- Adopting a Pet from the Pound Editorial

- Letter to the Editor Letter to the Editor

- Ann Personal Narrative

- Grandpa, Chaz, and Me Personal Narrative

- Indy’s Life Story Personal Narrative

- Jet Bikes Personal Narrative

- The Day I Took the Spotlight Personal Narrative

- A Story of Survival Book Review

- Chloe’s Day Story

- Did You Ever Look At . . . Poem

- Dreams Poem

- I Am Attean Poem

- Sloppy Joes Poem

- The Civil War Poem

- The Haunted House Story

- The Terror of Kansas Story

- When I Was Upside Down Poem

- Deer Don’t Need to Flee to Stay Trouble-Free! Report

- Height-Challenged German Shepherd Report

- Friendship Definition

- What Really Matters News Feature

- Cheating in America Problem-Solution

- Hang Up and Drive Editorial

- Musical Arts Editorial

- Summer: 15 Days or 2 1/2 Months? Editorial

- A Cowboy's Journal Fictionalized Journal Entry

- Giving Life Personal Narrative

- The Great Paw Paw Personal Narrative

- The Racist Warehouse Personal Narrative

- Limadastrin Poem

- The Best Little Girl in the World Book Review

- How the Stars Came to Be Story

- Linden’s Library Story

- My Backyard Poem

- The Call Poem

- I Am Latvia Research Report

- Mir Pushed the Frontier of Space Research Report

- The Aloha State Research Report

- The Incredible Egg Observation Report

- Unique Wolves Research Report

- Dear Dr. Larson Email

Personal Writing

- A Lesson to Learn Journal

- Caught in the Net Definition

- From Bed Bound to Breaking Boards News Feature

- If Only They Knew Comparison-Contrast

- Save the Elephants Cause-Effect

- Student Entrepreneur Reaches for Dreams of the Sky News Feature

- Internet Plagiarism Problem-Solution

- Mosquito Madness Pet Peeve

- Anticipating the Dream Personal Narrative

- Huddling Together Personal Narrative

- H’s Hickory Chips Personal Narrative

- It’s a Boy! Personal Narrative

- My Greatest Instrument Personal Narrative

- Snapshots Personal Narrative

- Take Me to Casablanca Personal Narrative

- The Boy with Chris Pine Blue Eyes Personal Narrative

- The Climb Personal Narrative

- The House on Medford Avenue Personal Narrative

- Adam’s Train of Ghosts Music Review

- Diary of Gaspard Fictionalized Journal Entry

- My Interpretation of The Joy Luck Club Literary Analysis

- Mama’s Stitches Poem

- The KHS Press Play

- Rosa Parks Research Report

- The Killer Bean Research Report

- Mid-Project Report on History Paper Email

- Vegetarian Lunch Options at Bay High Email

Picture Prompts

125 Picture Prompts for Creative and Narrative Writing

What story can these images tell?

By The Learning Network

For eight years, we at The Learning Network have been publishing short, accessible, image-driven prompts that invite students to do a variety of kinds of writing via our Picture Prompts column.

Each week, at least one of those prompts asks students: Use your imagination to write the opening of a short story or poem inspired by this image — or, tell us about a memory from your own life that it makes you think of.

Now we’re rounding up years of these storytelling prompts all in one place. Below you’ll find 125 photos, illustrations and GIFs from across The New York Times that you can use for both creative and personal writing. We have organized them by genre, but many overlap and intersect, so know that you can use them in any way you like.

Choose an image, write a story, and then follow the link in the caption to the original prompt to post your response or read what other students had to say. Many are still open for comment for teenagers 13 and up. And each links to a free Times article too.

We can’t wait to read the tales you spin! Don’t forget that you can respond to all of our Picture Prompts, as they publish, here.

Images by Category

Everyday Life, Mystery & Suspense, Relationships, Science Fiction, Travel & Adventure, Unusual & Unexpected

Cat in a chair, happy puppy, resourceful raccoon, cows and cellos, people and penguins, opossum among shoes, on the subway, sunset by the water, endless conversation, falling into a hole, lounging around, sneaker collection, the concert, meadow in starlight.

Public Selfies

Night circus, tarot cards, castle on a hill, security line, batman on a couch, reaching through the wall, beware of zombies, haunted house, familial frights, witches on the water, blindfolded, phone booth in the wilderness, shadow in the sky, a letter in the mail, hidden doorway.

Point of No Return

Darkened library, under the table, playing dominoes, looking back, a wave goodbye, out at dusk, conversation, walking away, alone and together, a new friend, heated conversation, up in a tree, hole in the ceiling, under the desk, at their computers, marching band, band practice, in the hallway, in the lunchroom, the red planet, tech gadgets, trapped inside, astronaut and spider, computer screen, special key, tethered in space, on the court, in the waves, city skateboarding.

Fishing in a Stream

Over the falls.

Under the Sea

Sledding in the mountains, cracked mirror, wilderness wayfaring, car and cactus, walking through town, tropical confinement, travel travails, roller coasters, atop the hill, climbing a ladder, under the ice, other selves.

Students 13 and older in the United States and Britain, and 16 and older elsewhere, are invited to comment. All comments are moderated by the Learning Network staff, but please keep in mind that once your comment is accepted, it will be made public and may appear in print.

Find more Picture Prompts here.

- Today, we’re introducing Meta Llama 3, the next generation of our state-of-the-art open source large language model.

- Llama 3 models will soon be available on AWS, Databricks, Google Cloud, Hugging Face, Kaggle, IBM WatsonX, Microsoft Azure, NVIDIA NIM, and Snowflake, and with support from hardware platforms offered by AMD, AWS, Dell, Intel, NVIDIA, and Qualcomm.

- We’re dedicated to developing Llama 3 in a responsible way, and we’re offering various resources to help others use it responsibly as well. This includes introducing new trust and safety tools with Llama Guard 2, Code Shield, and CyberSecEval 2.

- In the coming months, we expect to introduce new capabilities, longer context windows, additional model sizes, and enhanced performance, and we’ll share the Llama 3 research paper.

- Meta AI, built with Llama 3 technology, is now one of the world’s leading AI assistants that can boost your intelligence and lighten your load, helping you learn, get things done, create content, and connect to make the most out of every moment. You can try Meta AI here.

Today, we’re excited to share the first two models of the next generation of Llama, Meta Llama 3, available for broad use. This release features pretrained and instruction-fine-tuned language models with 8B and 70B parameters that can support a broad range of use cases. This next generation of Llama demonstrates state-of-the-art performance on a wide range of industry benchmarks and offers new capabilities, including improved reasoning. We believe these are the best open source models of their class, period. In support of our longstanding open approach, we’re putting Llama 3 in the hands of the community. We want to kickstart the next wave of innovation in AI across the stack—from applications to developer tools to evals to inference optimizations and more. We can’t wait to see what you build and look forward to your feedback.

Our goals for Llama 3

With Llama 3, we set out to build the best open models that are on par with the best proprietary models available today. We wanted to address developer feedback to increase the overall helpfulness of Llama 3 and are doing so while continuing to play a leading role on responsible use and deployment of LLMs. We are embracing the open source ethos of releasing early and often to enable the community to get access to these models while they are still in development. The text-based models we are releasing today are the first in the Llama 3 collection of models. Our goal in the near future is to make Llama 3 multilingual and multimodal, have longer context, and continue to improve overall performance across core LLM capabilities such as reasoning and coding.

State-of-the-art performance

Our new 8B and 70B parameter Llama 3 models are a major leap over Llama 2 and establish a new state of the art for LLMs at those scales. Thanks to improvements in pretraining and post-training, our pretrained and instruction-fine-tuned models are the best models existing today at the 8B and 70B parameter scale. Improvements in our post-training procedures substantially reduced false refusal rates, improved alignment, and increased diversity in model responses. We also saw greatly improved capabilities such as reasoning, code generation, and instruction following, making Llama 3 more steerable.

*Please see evaluation details for setting and parameters with which these evaluations are calculated.

In the development of Llama 3, we looked at model performance on standard benchmarks and also sought to optimize for performance in real-world scenarios. To this end, we developed a new high-quality human evaluation set. This evaluation set contains 1,800 prompts that cover 12 key use cases: asking for advice, brainstorming, classification, closed question answering, coding, creative writing, extraction, inhabiting a character/persona, open question answering, reasoning, rewriting, and summarization. To prevent accidental overfitting of our models on this evaluation set, even our own modeling teams do not have access to it. The chart below shows aggregated results of our human evaluations across these categories and prompts against Claude Sonnet, Mistral Medium, and GPT-3.5.

Preference rankings by human annotators based on this evaluation set highlight the strong performance of our 70B instruction-following model compared to competing models of comparable size in real-world scenarios.

Our pretrained model also establishes a new state-of-the-art for LLM models at those scales.

To develop a great language model, we believe it’s important to innovate, scale, and optimize for simplicity. We adopted this design philosophy throughout the Llama 3 project with a focus on four key ingredients: the model architecture, the pretraining data, scaling up pretraining, and instruction fine-tuning.

Model architecture

In line with our design philosophy, we opted for a relatively standard decoder-only transformer architecture in Llama 3. Compared to Llama 2, we made several key improvements. Llama 3 uses a tokenizer with a vocabulary of 128K tokens that encodes language much more efficiently, which leads to substantially improved model performance. To improve the inference efficiency of Llama 3 models, we’ve adopted grouped query attention (GQA) across both the 8B and 70B sizes. We trained the models on sequences of 8,192 tokens, using a mask to ensure self-attention does not cross document boundaries.
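The document-boundary masking mentioned above can be illustrated with a small sketch. This is not the Llama 3 training code; it only assumes that several documents are packed into one training sequence and that each token carries a hypothetical document id. A position may attend to an earlier position only when both belong to the same document.

```python
def document_causal_mask(doc_ids):
    """Return mask[i][j] = True when position i may attend to position j.

    Attention is allowed only to the same or an earlier position (causal)
    and only within the same document (no crossing of document boundaries).
    """
    n = len(doc_ids)
    return [
        [j <= i and doc_ids[i] == doc_ids[j] for j in range(n)]
        for i in range(n)
    ]


# Two documents packed into one sequence: three tokens, then two tokens.
# The ids are hypothetical labels chosen for this illustration.
doc_ids = [0, 0, 0, 1, 1]
mask = document_causal_mask(doc_ids)

for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
# x....
# xx...
# xxx..
# ...x.
# ...xx
```

The fourth token starts the second document, so it can see only itself even though three tokens precede it in the packed sequence.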

Training data

To train the best language model, the curation of a large, high-quality training dataset is paramount. In line with our design principles, we invested heavily in pretraining data. Llama 3 is pretrained on over 15T tokens that were all collected from publicly available sources. Our training dataset is seven times larger than that used for Llama 2, and it includes four times more code. To prepare for upcoming multilingual use cases, over 5% of the Llama 3 pretraining dataset consists of high-quality non-English data that covers over 30 languages. However, we do not expect the same level of performance in these languages as in English.

To ensure Llama 3 is trained on data of the highest quality, we developed a series of data-filtering pipelines. These pipelines include using heuristic filters, NSFW filters, semantic deduplication approaches, and text classifiers to predict data quality. We found that previous generations of Llama are surprisingly good at identifying high-quality data, hence we used Llama 2 to generate the training data for the text-quality classifiers that are powering Llama 3.
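A staged filtering pipeline of the kind described above might be sketched as follows. Everything here is an assumption made for illustration: the thresholds are arbitrary, exact hashing stands in for the semantic deduplication named in the text, and `toy_quality_score` is a placeholder for a trained text-quality classifier.

```python
import hashlib


def passes_heuristics(text, min_words=5, max_symbol_ratio=0.3):
    """Cheap heuristic filter: drop very short or symbol-heavy documents."""
    words = text.split()
    if len(words) < min_words:
        return False
    symbols = sum(1 for ch in text if not (ch.isalnum() or ch.isspace()))
    return symbols / len(text) <= max_symbol_ratio


def toy_quality_score(text):
    """Placeholder for a learned quality classifier: share of unique words."""
    words = text.lower().split()
    return len(set(words)) / len(words)


def filter_corpus(docs, min_quality=0.5):
    """Apply heuristics, exact deduplication, then the quality score."""
    seen = set()
    kept = []
    for text in docs:
        if not passes_heuristics(text):
            continue
        # Exact dedup on whitespace- and case-normalized text (not semantic).
        normalized = " ".join(text.lower().split())
        digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        if digest in seen:
            continue
        seen.add(digest)
        if toy_quality_score(text) < min_quality:
            continue
        kept.append(text)
    return kept


docs = [
    "The quick brown fox jumps over the lazy dog.",
    "the quick  brown fox jumps over the lazy dog.",  # duplicate once normalized
    "buy buy buy buy buy buy buy buy",                # repetitive, low score
    "$$$ !!! ###",                                    # too short
    "Grouped query attention shares key and value heads across queries.",
]
kept = filter_corpus(docs)
print(len(kept))  # 2
```

Running the cheap checks first means the more expensive scoring step only sees documents that survived the earlier stages.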

We also performed extensive experiments to evaluate the best ways of mixing data from different sources in our final pretraining dataset. These experiments enabled us to select a data mix that ensures that Llama 3 performs well across use cases including trivia questions, STEM, coding, historical knowledge, etc.

Scaling up pretraining

To effectively leverage our pretraining data in Llama 3 models, we put substantial effort into scaling up pretraining. Specifically, we have developed a series of detailed scaling laws for downstream benchmark evaluations. These scaling laws enable us to select an optimal data mix and to make informed decisions on how to best use our training compute. Importantly, scaling laws allow us to predict the performance of our largest models on key tasks (for example, code generation as evaluated on the HumanEval benchmark—see above) before we actually train the models. This helps us ensure strong performance of our final models across a variety of use cases and capabilities.

We made several new observations on scaling behavior during the development of Llama 3. For example, while the Chinchilla-optimal amount of training compute for an 8B parameter model corresponds to ~200B tokens, we found that model performance continues to improve even after the model is trained on two orders of magnitude more data. Both our 8B and 70B parameter models continued to improve log-linearly after we trained them on up to 15T tokens. Larger models can match the performance of these smaller models with less training compute, but smaller models are generally preferred because they are much more efficient during inference.
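The arithmetic behind the "two orders of magnitude" remark can be checked directly from the figures quoted above (8B parameters, roughly 200B Chinchilla-optimal tokens, up to 15T training tokens). The variable names are ours.

```python
import math

params = 8e9               # 8B-parameter model
chinchilla_tokens = 200e9  # ~200B tokens, the Chinchilla-optimal figure quoted above
trained_tokens = 15e12     # up to 15T tokens actually used

ratio = trained_tokens / chinchilla_tokens
orders_of_magnitude = math.log10(ratio)

print(ratio)                          # 75.0
print(f"{orders_of_magnitude:.2f}")   # 1.88
print(chinchilla_tokens / params)     # 25.0 tokens per parameter
print(trained_tokens / params)        # 1875.0 tokens per parameter
```

A factor of 75 is a little under two full orders of magnitude, which is consistent with the approximate wording in the text.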

To train our largest Llama 3 models, we combined three types of parallelization: data parallelization, model parallelization, and pipeline parallelization. Our most efficient implementation achieves a compute utilization of over 400 TFLOPS per GPU when trained on 16K GPUs simultaneously. We performed training runs on two custom-built 24K GPU clusters. To maximize GPU uptime, we developed an advanced new training stack that automates error detection, handling, and maintenance. We also greatly improved our hardware reliability and detection mechanisms for silent data corruption, and we developed new scalable storage systems that reduce overheads of checkpointing and rollback. Those improvements resulted in an overall effective training time of more than 95%. Combined, these improvements increased the efficiency of Llama 3 training by about three times compared to Llama 2.

Instruction fine-tuning

To fully unlock the potential of our pretrained models in chat use cases, we innovated on our approach to instruction-tuning as well. Our approach to post-training is a combination of supervised fine-tuning (SFT), rejection sampling, proximal policy optimization (PPO), and direct preference optimization (DPO). The quality of the prompts that are used in SFT and the preference rankings that are used in PPO and DPO has an outsized influence on the performance of aligned models. Some of our biggest improvements in model quality came from carefully curating this data and performing multiple rounds of quality assurance on annotations provided by human annotators.

Learning from preference rankings via PPO and DPO also greatly improved the performance of Llama 3 on reasoning and coding tasks. We found that if you ask a model a reasoning question that it struggles to answer, the model will sometimes produce the right reasoning trace: The model knows how to produce the right answer, but it does not know how to select it. Training on preference rankings enables the model to learn how to select it.
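To make the idea of learning from preference rankings concrete, here is a minimal sketch of the DPO loss for a single preference pair, following the commonly published formulation. The text does not give the exact objective or hyperparameters used for Llama 3, so the `beta` value and the log-probabilities below are assumptions.

```python
import math


def dpo_loss(policy_chosen, policy_rejected, ref_chosen, ref_rejected, beta=0.1):
    """DPO loss for one (chosen, rejected) pair of responses.

    Each argument is the summed log-probability of a response under the
    policy being trained or under a frozen reference model.
    """
    margin = (policy_chosen - ref_chosen) - (policy_rejected - ref_rejected)
    # -log(sigmoid(x)) written as log(1 + exp(-x)).
    return math.log1p(math.exp(-beta * margin))


# Hypothetical log-probabilities, chosen only to show the direction of the loss.
baseline = dpo_loss(-10.0, -10.0, -10.0, -10.0)  # policy equals reference
better = dpo_loss(-8.0, -12.0, -10.0, -10.0)     # policy favors the chosen answer
worse = dpo_loss(-12.0, -8.0, -10.0, -10.0)      # policy favors the rejected answer

print(better < baseline < worse)  # True
```

The loss falls as the policy raises the likelihood of the preferred answer relative to the rejected one, which is the "learning how to select it" behavior described above.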

Building with Llama 3

Our vision is to enable developers to customize Llama 3 to support relevant use cases and to make it easier to adopt best practices and improve the open ecosystem. With this release, we’re providing new trust and safety tools including updated components with both Llama Guard 2 and CyberSecEval 2, and the introduction of Code Shield, an inference-time guardrail for filtering insecure code produced by LLMs.

We’ve also co-developed Llama 3 with torchtune, the new PyTorch-native library for easily authoring, fine-tuning, and experimenting with LLMs. torchtune provides memory-efficient and hackable training recipes written entirely in PyTorch. The library is integrated with popular platforms such as Hugging Face, Weights & Biases, and EleutherAI, and even supports ExecuTorch for running efficient inference on a wide variety of mobile and edge devices. For everything from prompt engineering to using Llama 3 with LangChain, we have a comprehensive getting started guide that takes you from downloading Llama 3 all the way to deployment at scale within your generative AI application.

A system-level approach to responsibility

We have designed Llama 3 models to be maximally helpful while ensuring an industry leading approach to responsibly deploying them. To achieve this, we have adopted a new, system-level approach to the responsible development and deployment of Llama. We envision Llama models as part of a broader system that puts the developer in the driver’s seat. Llama models will serve as a foundational piece of a system that developers design with their unique end goals in mind.

Instruction fine-tuning also plays a major role in ensuring the safety of our models. Our instruction-fine-tuned models have been red-teamed (tested) for safety through internal and external efforts. Our red teaming approach leverages human experts and automation methods to generate adversarial prompts that try to elicit problematic responses. For instance, we apply comprehensive testing to assess risks of misuse related to Chemical, Biological, Cyber Security, and other risk areas. All of these efforts are iterative and used to inform safety fine-tuning of the models being released. You can read more about our efforts in the model card.

Llama Guard models are meant to be a foundation for prompt and response safety and can easily be fine-tuned to create a new taxonomy depending on application needs. As a starting point, the new Llama Guard 2 uses the recently announced MLCommons taxonomy, in an effort to support the emergence of industry standards in this important area. Additionally, CyberSecEval 2 expands on its predecessor by adding measures of an LLM’s propensity to allow for abuse of its code interpreter, offensive cybersecurity capabilities, and susceptibility to prompt injection attacks (learn more in our technical paper). Finally, we’re introducing Code Shield, which adds support for inference-time filtering of insecure code produced by LLMs. This offers mitigation of risks around insecure code suggestions, code interpreter abuse prevention, and secure command execution.

With the speed at which the generative AI space is moving, we believe an open approach is an important way to bring the ecosystem together and mitigate these potential harms. As part of that, we’re updating our Responsible Use Guide (RUG) that provides a comprehensive guide to responsible development with LLMs. As we outlined in the RUG, we recommend that all inputs and outputs be checked and filtered in accordance with content guidelines appropriate to the application. Additionally, many cloud service providers offer content moderation APIs and other tools for responsible deployment, and we encourage developers to also consider using these options.
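The recommendation to check both inputs and outputs can be sketched as a thin wrapper around any text generator. This is a toy illustration only: `BLOCKED_TERMS`, `violates_policy`, and `echo_model` are hypothetical names, and a simple substring blocklist stands in for a real safety classifier or moderation API.

```python
BLOCKED_TERMS = {"password dump", "credit card numbers"}  # hypothetical policy


def violates_policy(text):
    """Toy check: flag text containing any blocked term, ignoring case."""
    lowered = text.lower()
    return any(term in lowered for term in BLOCKED_TERMS)


def guarded_generate(generate, prompt, refusal="Sorry, I can't help with that."):
    """Filter the prompt before generation and the response after it."""
    if violates_policy(prompt):
        return refusal
    response = generate(prompt)
    if violates_policy(response):
        return refusal
    return response


def echo_model(prompt):
    """Stand-in for a real model call."""
    return f"You asked: {prompt}"


print(guarded_generate(echo_model, "Suggest a story opening"))
print(guarded_generate(echo_model, "Find me a PASSWORD DUMP"))
```

Keeping the check separate from the model call lets the same wrapper be reused with a stronger classifier without changing application code.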

Deploying Llama 3 at scale

Llama 3 will soon be available on all major platforms including cloud providers, model API providers, and much more. Llama 3 will be everywhere.

Our benchmarks show the tokenizer offers improved token efficiency, yielding up to 15% fewer tokens compared to Llama 2. Also, grouped query attention (GQA) has now been added to Llama 3 8B as well. As a result, we observed that despite the model having 1B more parameters compared to Llama 2 7B, the improved tokenizer efficiency and GQA contribute to maintaining the inference efficiency on par with Llama 2 7B.
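One way to see why GQA helps inference is to estimate the size of the key/value cache, which scales with the number of key/value heads rather than query heads. The layer count, head counts, head dimension, and 16-bit precision below are illustrative assumptions, not figures taken from this post; only the 8,192-token sequence length appears in the text.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Bytes needed to cache keys and values for one sequence."""
    # The leading factor of 2 accounts for storing both keys and values.
    return 2 * layers * kv_heads * head_dim * seq_len * bytes_per_value


# Assumed configuration, for illustration only.
layers, head_dim, seq_len = 32, 128, 8192
query_heads = 32
gqa_kv_heads = 8  # each group of 4 query heads shares one key/value head

full_attention = kv_cache_bytes(layers, query_heads, head_dim, seq_len)
grouped = kv_cache_bytes(layers, gqa_kv_heads, head_dim, seq_len)

print(full_attention / 2**30)     # 4.0 (GiB)
print(grouped / 2**30)            # 1.0 (GiB)
print(full_attention // grouped)  # 4
```

Under these assumptions the cache shrinks by the ratio of query heads to key/value heads, which reduces memory traffic during decoding.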

For examples of how to leverage all of these capabilities, check out Llama Recipes which contains all of our open source code that can be leveraged for everything from fine-tuning to deployment to model evaluation.

What’s next for Llama 3?

The Llama 3 8B and 70B models mark the beginning of what we plan to release for Llama 3. And there’s a lot more to come.

Our largest models are over 400B parameters and, while these models are still training, our team is excited about how they’re trending. Over the coming months, we’ll release multiple models with new capabilities including multimodality, the ability to converse in multiple languages, a much longer context window, and stronger overall capabilities. We will also publish a detailed research paper once we are done training Llama 3.

To give you a sneak preview for where these models are today as they continue training, we thought we could share some snapshots of how our largest LLM model is trending. Please note that this data is based on an early checkpoint of Llama 3 that is still training and these capabilities are not supported as part of the models released today.

We’re committed to the continued growth and development of an open AI ecosystem for releasing our models responsibly. We have long believed that openness leads to better, safer products, faster innovation, and a healthier overall market. This is good for Meta, and it is good for society. We’re taking a community-first approach with Llama 3, and starting today, these models are available on the leading cloud, hosting, and hardware platforms with many more to come.

Try Meta Llama 3 today

We’ve integrated our latest models into Meta AI, which we believe is the world’s leading AI assistant. It’s now built with Llama 3 technology and it’s available in more countries across our apps.

You can use Meta AI on Facebook, Instagram, WhatsApp, Messenger, and the web to get things done, learn, create, and connect with the things that matter to you. You can read more about the Meta AI experience here.

Visit the Llama 3 website to download the models and reference the Getting Started Guide for the latest list of all available platforms.

You’ll also soon be able to test multimodal Meta AI on our Ray-Ban Meta smart glasses.

As always, we look forward to seeing all the amazing products and experiences you will build with Meta Llama 3.

IMAGES

VIDEO

COMMENTS

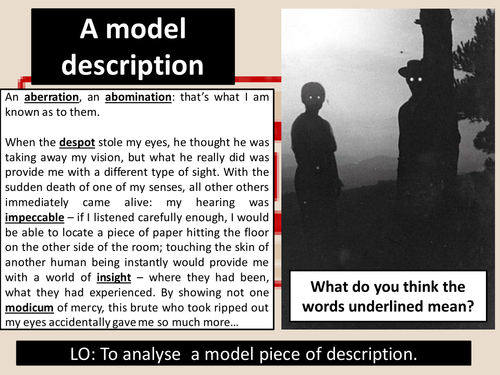

In Paper 1 Question 5 you will be presented with a choice of two writing tasks and a stimulus image. One task will ask you to write descriptively, most likely based on the image, and the other question will ask you to write a story, based on a statement or title.

Question Format. For Question 5 in the AQA GCSE English Language exam, you have a choice of two questions to answer. You can either write a description based on an image, or you can write a story with a title relevant to the theme of the paper. On the right is an example question in the same format that you will find in your exam.

1. English Language Paper 1. Explorations in creative reading and writing 1 hour 45 minutes. Revision Guide. This guide gives you: Examples of questions and model answers Mark-schemes and tips Suggested timings Questions for you to have a go at yourself Terminology Guide. S. Gunter 2019. 2.

Choosing an unusual narrator can make your writing more original, but just as important is the tone or the voice you adopt. Read this opening to the novel High Fidelity by Nick Hornby. My desert-island, all time, top five most memorable split-ups, in chronological order: 1) Alison Ashworth 2) Penny Hardwick 3) Jackie Allen 4) Charlie Nicholson

Information. The marks for questions are shown in brackets. The maximum mark for this paper is 80. There are 40 marks for Section A and 40 marks for Section B. You are reminded of the need for good English and clear presentation in your answers. You will be assessed on the quality of your reading in Section A.

Tips and strategies for writing a high scoring GCSE creative writing paper: 1. Learn the formats. Know the different formats and conventions of the different GCSE writing tasks. There is a standard layout for a leaflet, for example, where including contact details and a series of bullet points is part of the mark scheme.

Written By Lottie Ingham. Creative writing can be fun but it can also seem daunting in an assessment setting. Have a go at a Question 5 Paper 1 question using the planning sheet below to help build your ideas. Download Worksheet. Want to submit your full creative writing piece for marking?

This resource is a PDF file containing 50 original prompts and questions for teaching/practising creative writing. Each page is one 'AQA Language Paper 1, Question 5'-style question, with a choice of a descriptive or narrative response. There are a range of images, some more abstract and challenging than others, to suit students of all abilities.

11+ creative writing questions from real papers—non-fiction prompts. Write a thank you letter for a present you didn't want. You are about to interview someone for a job. Write a list of questions you would like to ask the applicant. Write a letter to complain about the uniform at your school.

In this bumper pack of practice questions, there are over ninety creative writing questions in the style of AQA's GCSE English Language Paper One Question Five (Q5), as well as two revision lessons. The practice exam questions - on a range of topics - can be given to students as extra revision ...

Paper 1 Question 3: Model Answer. In Question 3, you will be set a question that asks you to comment on the whole of the source text in Section A. The text will always be a prose text from either the 20th or 21st century. You will be asked to consider how the writer has structured the text to interest you as a reader.

The class 12 sample paper solutions for Creative Writing subject includes the correct answers of all questions asked in the model question paper. It is also known as the CBSE sample paper Creative Writing class 12 marking scheme as it also conveys how many marks you will get for steps or keywords in the answer.

Here's a descriptive writing example answer that I completed in timed conditions for AQA English Language Paper 1, Question 5. This question is worth HALF of your marks for the entire paper, so getting it right is crucial to receiving a high grade overall for your English GCSE. Underneath the answer, I'll provide some feedback and analysis ...

GCSE Creative Writing Model-Style Answer. Subject: English. Age range: 14-16. Resource type: Other. A FREE resource that showcases the style of what a model answer to a creative writing question at GCSE (9-1) English Language would look like - SUITABLE TO ALL EXAM BOARDS. N.B. The length of the resource is NOT ...

Language Paper 1: Question 5 Descriptive Writing Write a description of a circus as suggested by this picture: LAYOUT Panoramic- describe the scene, broadly. Introduce the time and atmosphere. Zoom- focus your lens in on one segment of the image (draw a box) Single line - emphasise the key feeling of your description in one line, apart ...

CRITICAL THINKING AND CREATIVE WRITING OPEN ELECTIVE PAPER - I (As per National Education Policy 2020) I SEMESTER Undergraduate courses Chief Editor Dr. T.N. THANDAVA GOWDA PRASARANGA BENGALURU CITY UNIVERSITY, BENGALURU . 3 ... 11.Model Question Paper ….. 79 . 4 UNIT- 1

AQA GCSE English Language Paper 1 Question 5 Model creative responses. Subject: English. Age range: 14-16. Resource type: Lesson (complete). A resource designed to allow students to analyse the components of a model answer and apply it to their own creative writing. This can be bought as part of a package as well.

Student Models. When you need an example written by a student, check out our vast collection of free student models. Scroll through the list, or search for a mode of writing such as "explanatory" or "persuasive."
