Critical thinking

We’ve already established that information can be biased. Now it’s time to look at our own bias.

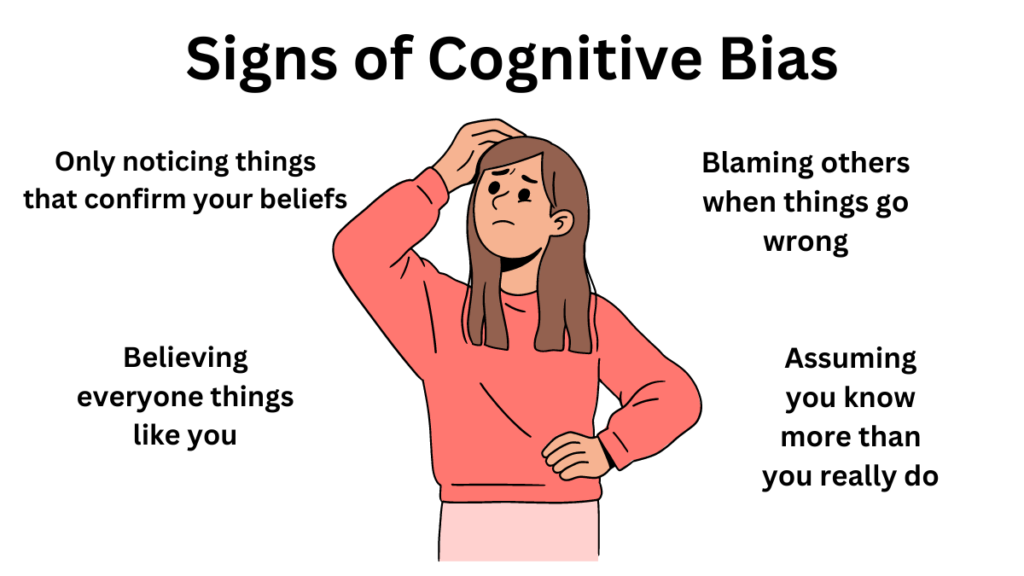

Studies have shown that we are more likely to accept information when it fits into our existing worldview, a phenomenon known as confirmation or myside bias (for examples, see Kappes et al., 2020; McCrudden & Barnes, 2016; Pilditch & Custers, 2018). Wittebols (2019) defines it as a “tendency to be psychologically invested in the familiar and what we believe and less receptive to information that contradicts what we believe” (p. 211). Quite simply, we may reject information that doesn’t support our existing thinking.

This can manifest in a number of ways; Hahn and Harris (2014) suggest four main behaviours:

- Searching only for information that supports our held beliefs

- Failing to critically evaluate information that supports our held beliefs (accepting it at face value) while explaining away, or being overly critical of, information that might contradict them

- Becoming set in our thinking once an opinion has been formed, and deliberately ignoring any new information on the topic

- A tendency to be overconfident in the validity of our held beliefs.

Peters (2020) also suggests that we’re more likely to remember information that supports our way of thinking, further cementing our bias. Taken together, the research suggests that bias has a huge impact on the way we think. To learn more about how and why bias can impact our everyday thinking, watch this short video.

Filter bubbles and echo chambers

The theory of filter bubbles emerged in 2011, proposed by Internet activist Eli Pariser. He defined a filter bubble as “your own personal unique world of information that you live in online” (Pariser, 2011, 4:21). When Pariser proposed the theory, he focused on the impact of the algorithms used by social media platforms and search engines, which prioritised content and personalised results based on the individual’s past online activity, suggesting that “the Internet is showing us what it thinks we want to see, but not necessarily what we should see” (Pariser, 2011, 3:47). Watch his TED talk if you’d like to know more.

Our understanding of filter bubbles has since expanded to recognise that individuals also select and create their own filter bubbles. This happens when you seek out like-minded individuals or sources, or follow friends or people you admire on social media: people with whom you’re likely to share beliefs, points of view, and interests. Barack Obama (2017) addressed the concept of filter bubbles in his presidential farewell address:

For too many of us it’s become safer to retreat into our own bubbles, whether in our neighbourhoods, or on college campuses, or places of worship, or especially our social media feeds, surrounded by people who look like us and share the same political outlook and never challenge our assumptions… Increasingly we become so secure in our bubbles that we start accepting only information, whether it’s true or not, that fits our opinions, instead of basing our opinions on the evidence that is out there. (Obama, 2017, 22:57).

Filter bubbles are not unique to the social media age. The earlier term echo chamber described the same phenomenon in the news media, where different channels cater to different points of view. Within an echo chamber, people can seek out information that supports their existing beliefs without encountering information that might challenge, contradict, or oppose them.

Other forms of bias

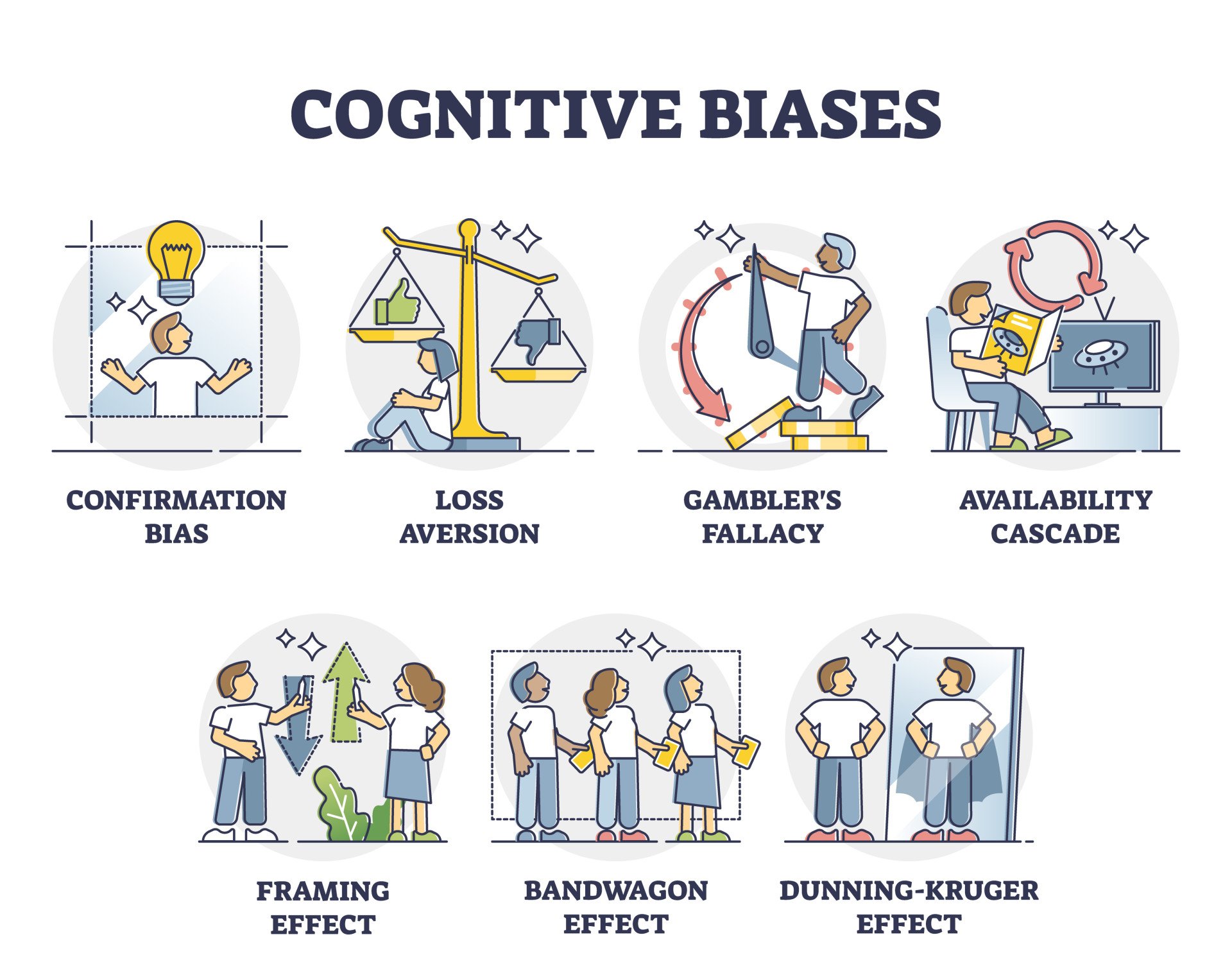

There are many different ways in which bias can affect the way you think and how you process new information. Try the quiz below to discover some additional forms of bias, or check out Buzzfeed’s 2017 article on cognitive bias.

Cognitive Bias: How We Are Wired to Misjudge

Charlotte Ruhl

Research Assistant & Psychology Graduate

BA (Hons) Psychology, Harvard University

Charlotte Ruhl, a psychology graduate from Harvard College, boasts over six years of research experience in clinical and social psychology. During her tenure at Harvard, she contributed to the Decision Science Lab, administering numerous studies in behavioral economics and social psychology.


Saul Mcleod, PhD

Editor-in-Chief for Simply Psychology

BSc (Hons) Psychology, MRes, PhD, University of Manchester

Saul Mcleod, PhD, is a qualified psychology teacher with over 18 years of experience in further and higher education. He has been published in peer-reviewed journals, including the Journal of Clinical Psychology.

Olivia Guy-Evans, MSc

Associate Editor for Simply Psychology

BSc (Hons) Psychology, MSc Psychology of Education

Olivia Guy-Evans is a writer and associate editor for Simply Psychology. She has previously worked in healthcare and educational sectors.


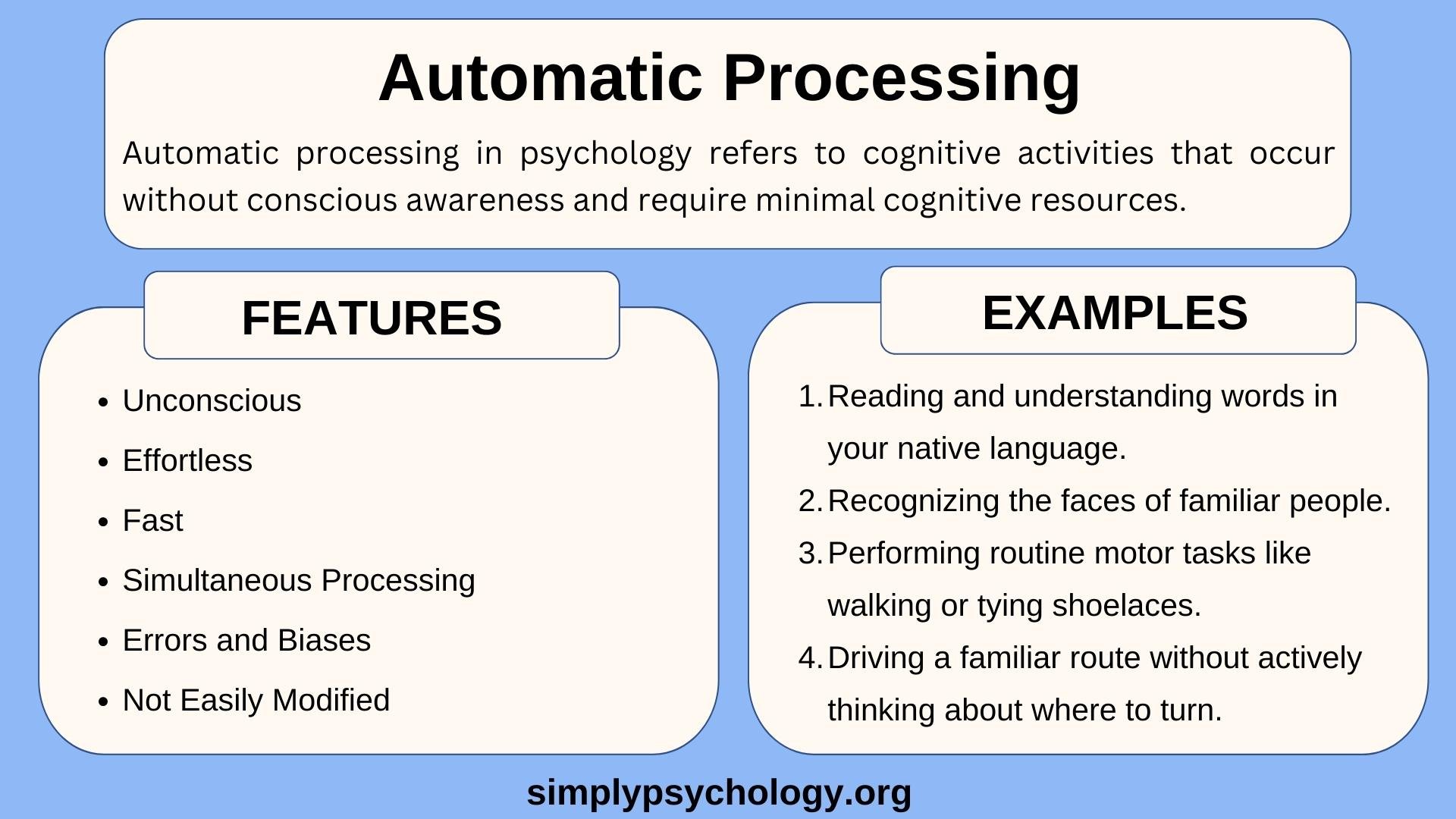

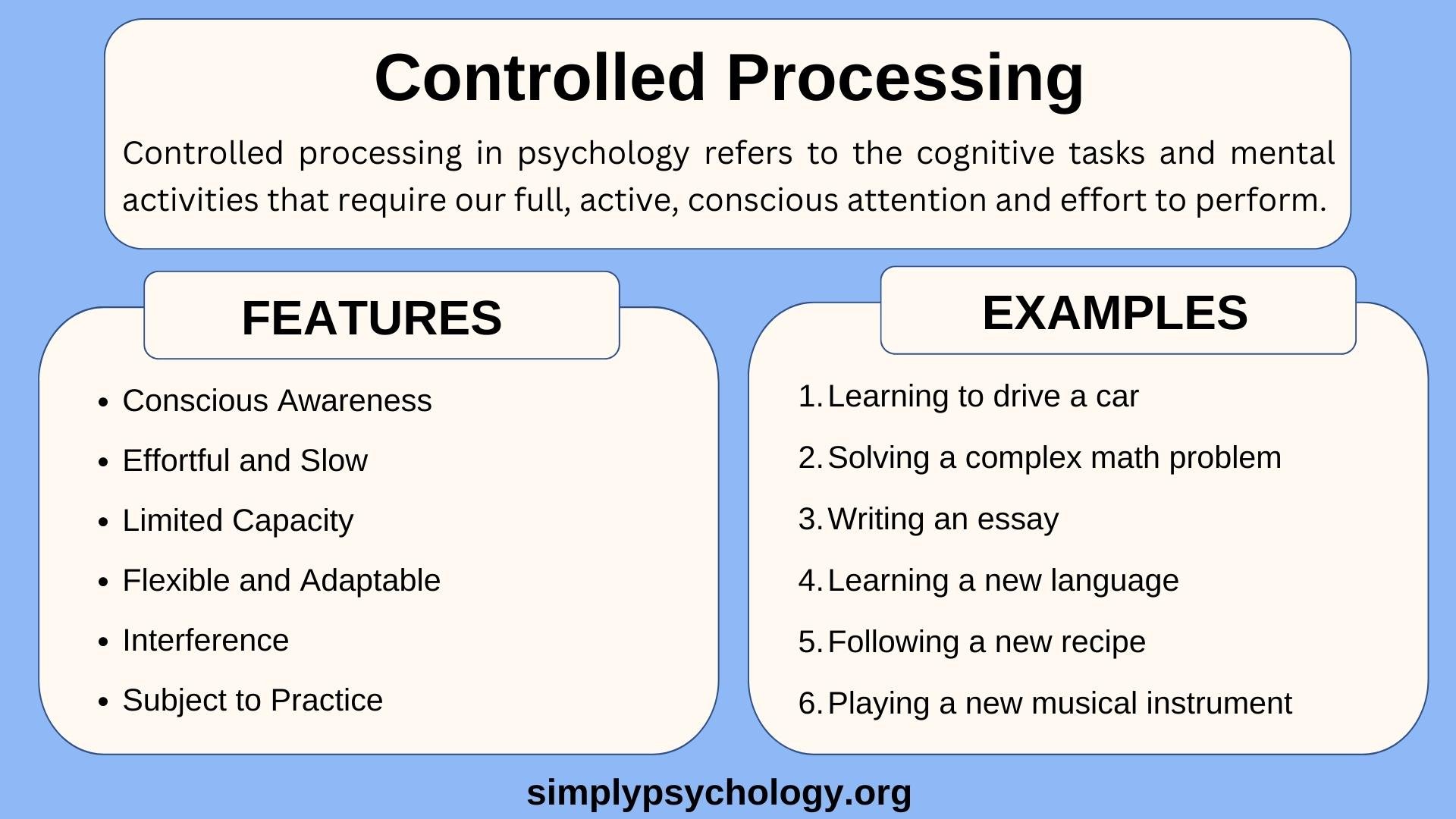

Have you ever been so busy talking on the phone that you don’t notice the light has turned green and it is your turn to cross the street?

Have you ever shouted, “I knew that was going to happen!” after your favorite baseball team gave up a huge lead in the ninth inning and lost?

Or have you ever found yourself only reading news stories that further support your opinion?

These are just a few of the many instances of cognitive bias that we experience every day of our lives. But before we dive into these different biases, let’s backtrack first and define what bias is.

What is Cognitive Bias?

Cognitive bias is a systematic error in thinking, affecting how we process information, perceive others, and make decisions. It can lead to irrational thoughts or judgments and is often based on our perceptions, memories, or individual and societal beliefs.

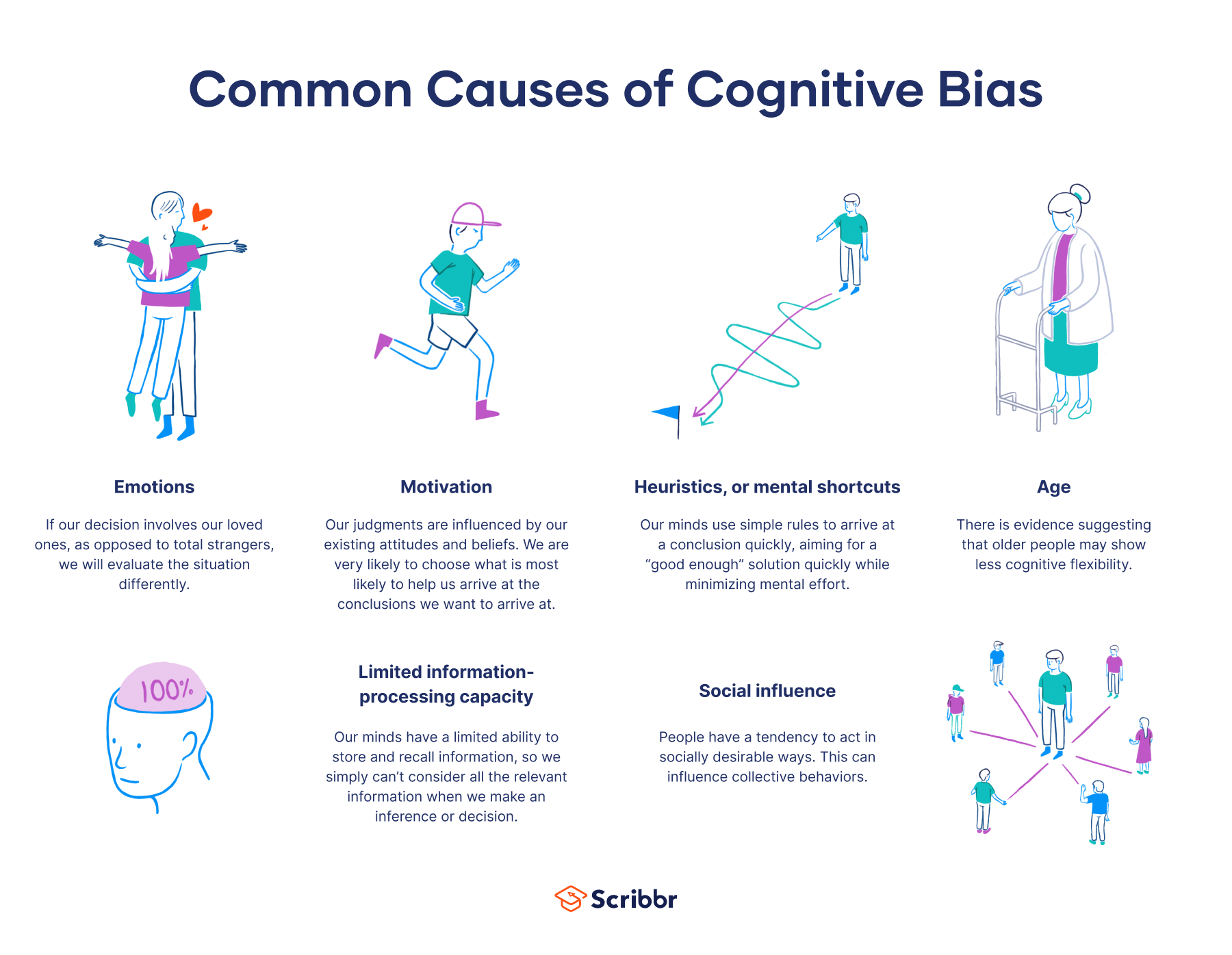

Cognitive biases are largely unconscious, automatic processes that make decision-making quicker and more efficient. They can be caused by many things, such as heuristics (mental shortcuts), social pressures, and emotions.

Broadly speaking, bias is a tendency to lean in favor of or against a person, group, idea, or thing, usually in an unfair way. Biases are natural, a product of human nature, and they don’t simply exist in a vacuum or in our minds: they affect the way we make decisions and act.

In psychology, there are two main branches of biases: conscious and unconscious. Conscious or explicit bias is intentional — you are aware of your attitudes and the behaviors resulting from them (Lang, 2019).

Explicit bias can be good because it helps provide you with a sense of identity and can lead you to make good decisions (for example, being biased towards healthy foods).

However, these biases can often be dangerous when they take the form of conscious stereotyping.

On the other hand, unconscious bias, or cognitive bias, represents a set of unintentional biases: you are unaware of your attitudes and the behaviors resulting from them (Lang, 2019).

Cognitive bias is often a result of your brain’s attempt to simplify information processing: we receive roughly 11 million bits of information per second but can process only about 40 bits per second (Orzan et al., 2012).

Therefore, we often rely on mental shortcuts (called heuristics) to help make sense of the world with relative speed. As such, these errors tend to arise from problems related to thinking: memory, attention, and other mental mistakes.

Cognitive biases can be beneficial because they do not require much mental effort and can allow you to make decisions relatively quickly, but like conscious biases, unconscious biases can also take the form of harmful prejudice that serves to hurt an individual or a group.

Although it may feel like there has been a recent rise of unconscious bias, especially in the context of police brutality and the Black Lives Matter movement, this is not a new phenomenon.

Thanks to Tversky and Kahneman (and several other psychologists who have paved the way), we now have an existing dictionary of our cognitive biases.

Again, these biases occur as an attempt to simplify the complex world and make information processing faster and easier. This section will dive into some of the most common forms of cognitive bias.

Confirmation Bias

Confirmation bias is the tendency to interpret new information as confirmation of your preexisting beliefs and opinions while giving disproportionately less consideration to alternative possibilities.

Real-World Examples

Since Wason’s 1960 experiment, real-world examples of confirmation bias have gained attention.

This bias often seeps into the research world when psychologists selectively interpret data or ignore unfavorable data to produce results that support their initial hypothesis.

Confirmation bias is also incredibly pervasive on the internet, particularly with social media. We tend to read online news articles that support our beliefs and fail to seek out sources that challenge them.

Various social media platforms, such as Facebook, help reinforce our confirmation bias by feeding us stories that we are likely to agree with, pushing us further into echo chambers of political polarization.

Some examples of confirmation bias are especially harmful, specifically in the context of the law. For example, a detective may identify a suspect early in an investigation, seek out confirming evidence, and downplay falsifying evidence.

Experiments

Research on confirmation bias dates back to 1960, when Peter Wason challenged participants to identify a rule applying to triples of numbers.

People were first told that the sequence 2, 4, 6 fit the rule; they then had to generate triples of their own and were told whether each one fit the rule. The rule was simple: any ascending sequence.

Not only did participants have an unusually difficult time discovering this, devising overly complicated hypotheses instead, but they also generated only triples that confirmed their existing hypothesis (Wason, 1960).
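The logic of the 2-4-6 task can be sketched in a few lines of Python. This is a minimal illustration rather than Wason’s actual procedure: the function names and the participant’s guess (“each number goes up by 2”) are hypothetical, and only the hidden rule (any ascending sequence) comes from the study described above. It shows why testing only triples that fit your own guess can never reveal that the guess is wrong.

```python
# Illustrative sketch of the 2-4-6 task (not Wason's original materials).

def hidden_rule(triple):
    """The experimenter's rule from the study: any strictly ascending sequence."""
    a, b, c = triple
    return a < b < c

def my_hypothesis(triple):
    """A hypothetical participant's overly specific guess: numbers increase by 2."""
    a, b, c = triple
    return b - a == 2 and c - b == 2

def run_tests(triples):
    """Pair each triple with the guess's prediction and the experimenter's feedback."""
    return [(t, my_hypothesis(t), hidden_rule(t)) for t in triples]

def falsified(results):
    """The guess is refuted if any prediction disagrees with the feedback."""
    return any(predicted != actual for _, predicted, actual in results)

# Confirmatory strategy: only test triples the guess says should fit.
confirming = [(8, 10, 12), (20, 22, 24), (1, 3, 5)]
# Disconfirming strategy: also test triples the guess says should NOT fit.
disconfirming = [(1, 2, 3), (5, 10, 40), (6, 4, 2)]

print(falsified(run_tests(confirming)))     # False: every test "confirms" the guess
print(falsified(run_tests(disconfirming)))  # True: (1, 2, 3) fits the rule but not the guess
```

Only the second set of tests, which deliberately includes triples the guess predicts should fail, exposes the mismatch between the guess and the real rule.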

Explanations

But why does confirmation bias occur? It’s partially due to the effect of desire on our beliefs. In other words, certain desired conclusions (ones that support our beliefs) are more likely to be processed by the brain and labeled as true (Nickerson, 1998).

This motivational explanation is often coupled with a more cognitive theory.

The cognitive explanation argues that because our minds can focus on only one thing at a time, it is hard to process alternative hypotheses in parallel, so we process only the information that aligns with our beliefs (Nickerson, 1998).

Another theory explains confirmation bias as a way of enhancing and protecting our self-esteem.

As with the self-serving bias (see more below), our minds choose to reinforce our preexisting ideas because being right helps preserve our sense of self-esteem, which is important for feeling secure in the world and maintaining positive relationships (Casad, 2019).

Although confirmation bias has obvious consequences, you can still work towards overcoming it by being open-minded and willing to look at situations from a different perspective than you might be used to (Luippold et al., 2015).

Even though this bias is unconscious, training your mind to become more flexible in its thought patterns will help mitigate the effects of this bias.

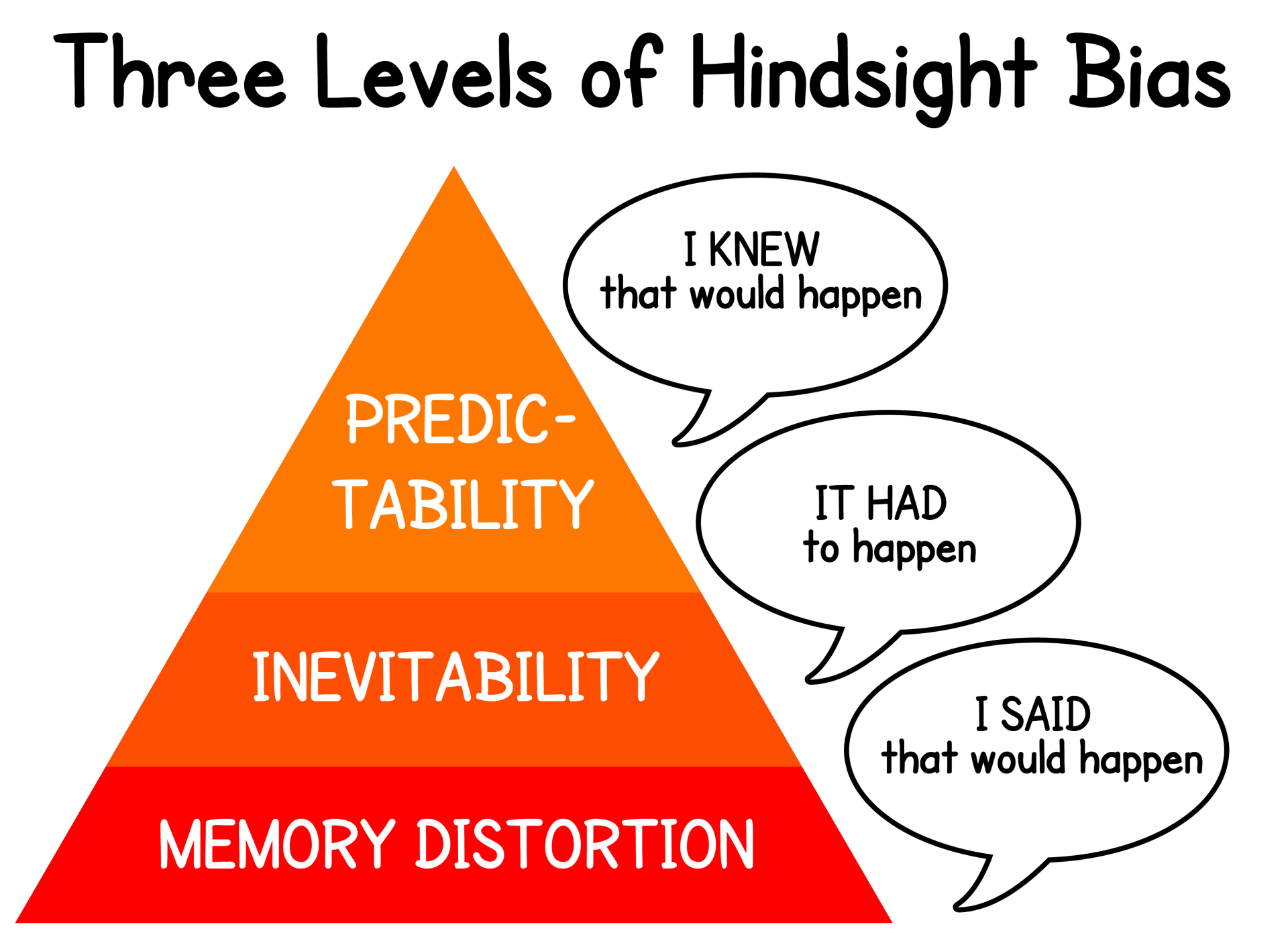

Hindsight Bias

Hindsight bias refers to the tendency to perceive past events as more predictable than they actually were (Roese & Vohs, 2012). There are cognitive and motivational explanations for why, once an event is over, we feel so certain that we knew how it would turn out.

When sports fans know the outcome of a game, they often question certain decisions coaches make that they otherwise would not have questioned or second-guessed.

And fans are also quick to remark that they knew their team was going to win or lose, but, of course, they only make this statement after their team actually did win or lose.

Research has shown that simply recognizing the hindsight bias does not necessarily reduce it (Pohl & Hell, 1996).

Even so, you can make a conscious effort to remind yourself that you can’t predict the future and motivate yourself to consider alternative explanations.

It’s important to do all we can to reduce this bias because when we are overconfident about our ability to predict outcomes, we may make risky decisions in the future that could have dangerous consequences.

Building on Tversky and Kahneman’s growing list of heuristics, researchers Baruch Fischhoff and Ruth Beyth (later Beyth-Marom) (1975) were the first to investigate the hindsight bias directly in an empirical setting.

The team asked participants to judge the likelihood of several different outcomes of U.S. President Richard Nixon’s 1972 visits to Beijing and Moscow.

After Nixon returned to the United States, participants were asked to recall the likelihood they had initially assigned to each outcome.

Fischhoff and Beyth found that for events that actually occurred, participants greatly overestimated the initial likelihood they assigned to those events.

That same year, Fischhoff (1975) introduced a new method for testing the hindsight bias – one that researchers still use today.

Participants are given a short story with four possible outcomes, and they are told that one is true. When they are then asked to assign the likelihood of each specific outcome, they regularly assign a higher likelihood to whichever outcome they have been told is true, regardless of how likely it actually is.

But hindsight bias does not exist only in artificial settings. Dorothee Dietrich and Matthew Olson (1993) asked college students to predict how the U.S. Senate would vote on the confirmation of Supreme Court nominee Clarence Thomas.

Before the vote, 58% of participants predicted that he would be confirmed, but after his actual confirmation, 78% of students said that they thought he would be approved – a prime example of the hindsight bias. And this form of bias extends beyond the research world.

From the cognitive perspective, hindsight bias may result from distortions in our memories of what we knew, or believed we knew, before an event occurred (Inman, 2016).

It is easier to recall information that is consistent with our current knowledge, so our memories become warped in a way that agrees with what actually did happen.

Motivational explanations of the hindsight bias point to the fact that we are motivated to live in a predictable world (Inman, 2016).

When surprising outcomes arise, our expectations are violated, and we may experience negative reactions as a result. Thus, we rely on the hindsight bias to avoid these adverse responses to certain unanticipated events and reassure ourselves that we actually did know what was going to happen.

Self-Serving Bias

Self-serving bias is the tendency to take personal responsibility for positive outcomes and blame external factors for negative outcomes.

You would be right to ask how this differs from the fundamental attribution error (Ross, 1977), which describes our tendency to overemphasize internal factors when explaining other people’s behavior while attributing our own behavior to external factors.

The distinction is that the self-serving bias is concerned with valence, that is, how good or bad an event or situation is. It is also concerned only with events in which you are the actor.

For example, if a driver cuts in front of you as the light turns green, the fundamental attribution error might cause you to think that they are a bad person and not consider the possibility that they were late for work.

On the other hand, the self-serving bias is exercised when you are the actor. In this example, you would be the driver cutting in front of the other car, which you would tell yourself is because you are late (an external attribution to a negative event) as opposed to it being because you are a bad person.

From sports to the workplace, self-serving bias is incredibly common. For example, athletes are quick to take responsibility for personal wins, attributing their successes to their hard work and mental toughness, but point to external factors, such as unfair calls or bad weather, when they lose (Allen et al., 2020).

In the workplace, people attribute internal factors when they are hired for a job but external factors when they are fired (Furnham, 1982). And in the office itself, workplace conflicts are given external attributions, and successes, whether a persuasive presentation or a promotion, are awarded internal explanations (Walther & Bazarova, 2007).

Additionally, self-serving bias is more prevalent in individualistic cultures, which place emphasis on self-esteem levels and individual goals, and it is less prevalent among individuals with depression (Mezulis et al., 2004), who are more likely to take responsibility for negative outcomes.

Overcoming this bias can be difficult because doing so comes at the expense of our self-esteem. Nevertheless, practicing self-compassion – treating yourself with kindness even when you fall short or fail – can help reduce the self-serving bias (Neff, 2003).

The leading explanation for the self-serving bias is that it is a way of protecting our self-esteem (similar to one of the explanations for the confirmation bias).

We are quick to take credit for positive outcomes and divert the blame for negative ones to boost and preserve our individual ego, which is necessary for confidence and healthy relationships with others (Heider, 1982).

Another theory argues that self-serving bias occurs when surprising events arise. When certain outcomes run counter to our expectations, we ascribe external factors, but when outcomes are in line with our expectations, we attribute internal factors (Miller & Ross, 1975).

An extension of this theory asserts that we are naturally optimistic, so negative outcomes come as a surprise and receive external attributions as a result.

Anchoring Bias

Anchoring bias is closely related to the decision-making process. It occurs when we rely too heavily on pre-existing information or on the first piece of information we receive (the anchor) when making a decision.

For example, if you first see a T-shirt that costs $1,000 and then see a second one that costs $100, you’re more likely to see the second shirt as cheap than you would if the first shirt you saw had cost $120. Here, the price of the first shirt influences how you view the second.

Sarah is looking to buy a used car. The first dealership she visits has a used sedan listed for $19,000. Sarah takes this initial listing price as an anchor and uses it to evaluate prices at other dealerships.

When she sees another similar used sedan priced at $18,000, that price seems like a good bargain compared to the $19,000 anchor price she saw first, even though the actual market value is closer to $16,000.

When Sarah finds a comparable used sedan priced at $15,500, she continues perceiving that price as cheap compared to her anchored reference price.

Ultimately, Sarah purchases the $18,000 sedan, overlooking that all of the prices seemed like bargains only in relation to the initial high anchor price.

The key elements that demonstrate anchoring bias here are:

- Sarah establishes an initial reference price based on the first listing she sees ($19k)

- She uses that initial price as her comparison/anchor for evaluating subsequent prices

- This biases her perception of the market value of the cars she looks at after the initial anchor is set

- She makes a purchase decision aligned with her anchored expectations rather than a more objective market value
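The arithmetic of Sarah’s decision can be made explicit. This sketch uses only the figures given in the example (a $19,000 anchor, an $18,000 purchase, and a market value of about $16,000); the helper name `pct_change` is hypothetical. Measured against the anchor, the purchase looks like a saving; measured against the approximate market value, it is a premium.

```python
# Figures taken from the Sarah example; market_value is the text's
# approximate estimate of what comparable sedans are actually worth.
anchor = 19_000
market_value = 16_000
chosen_price = 18_000

def pct_change(price, reference):
    """Percentage difference of `price` relative to `reference` (hypothetical helper)."""
    return 100 * (price - reference) / reference

saving_vs_anchor = pct_change(chosen_price, anchor)          # negative: feels like a deal
premium_vs_market = pct_change(chosen_price, market_value)   # positive: paying above value

print(f"vs anchor: {saving_vs_anchor:.1f}%")   # vs anchor: -5.3%
print(f"vs market: {premium_vs_market:.1f}%")  # vs market: 12.5%
```

The same $18,000 price reads as about 5% below the anchor but 12.5% above the market estimate, which is the gap the anchor hides.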

Multiple theories seek to explain the existence of this bias.

One theory, known as anchoring and adjustment, argues that once an anchor is established, people adjust away from it insufficiently, so their final guess or decision is closer to the anchor than it otherwise would have been (Tversky & Kahneman, 1974).

And when people experience a greater cognitive load (the amount of information the working memory can hold at any given time; for example, a difficult decision as opposed to an easy one), they are more susceptible to the effects of anchoring.

Another theory, selective accessibility, holds that although we assume the anchor is not a suitable answer (or, going back to the initial example, not a suitable price), when we evaluate the second stimulus (the second shirt), we look for ways in which it is similar to or different from the anchor (here, the price being very different), resulting in the anchoring effect (Mussweiler & Strack, 1999).

A final theory posits that providing an anchor changes someone’s attitudes to be more favorable to the anchor, which then biases future answers to have similar characteristics as the initial anchor.

Although there are many different theories for why we experience anchoring bias, they all agree that it affects our decisions in real ways (Wegener et al., 2001).

This bias was first brought to light in one of Tversky and Kahneman’s (1974) early experiments. They asked participants to estimate, within five seconds, the product of the numbers 1 to 8, written either as 1x2x3… or as 8x7x6…

Participants did not have enough time to calculate the answer, so they had to estimate based on their first few calculations.

They found that those who computed the small multiplications first (i.e., 1x2x3…) gave a median estimate of 512, but those who computed the larger multiplications first gave a median estimate of 2,250 (although the actual answer is 40,320).

This demonstrates how the first few calculations influenced participants’ final answers.
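The arithmetic behind this task is easy to check. The sketch below, which assumes Python 3.8 or later for `math.prod`, computes the true product and the first few partial products in each order. How many steps participants actually completed before time ran out is an assumption made for illustration, not a figure from the study, and the sketch does not model how they extrapolated.

```python
import math

# Tversky and Kahneman's (1974) product task: 1 x 2 x ... x 8,
# presented in ascending or descending order.
ascending = list(range(1, 9))   # [1, 2, 3, 4, 5, 6, 7, 8]
descending = ascending[::-1]    # [8, 7, 6, 5, 4, 3, 2, 1]

true_product = math.prod(ascending)  # 8! = 40,320

def partial_product(seq, steps):
    """Product of the first `steps` terms of `seq`.

    Stands in for what a participant might have computed before the
    five seconds ran out; the number of steps is an assumption.
    """
    return math.prod(seq[:steps])

print(true_product)  # 40320
for steps in (3, 4):
    # Prints: steps, ascending partial product, descending partial product
    print(steps, partial_product(ascending, steps), partial_product(descending, steps))
# Output lines: "3 6 336" and "4 24 1680"
```

The descending order starts from much larger intermediate values, which is consistent with the higher median estimate reported (2,250 versus 512), although both groups stayed far below the true answer of 40,320.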

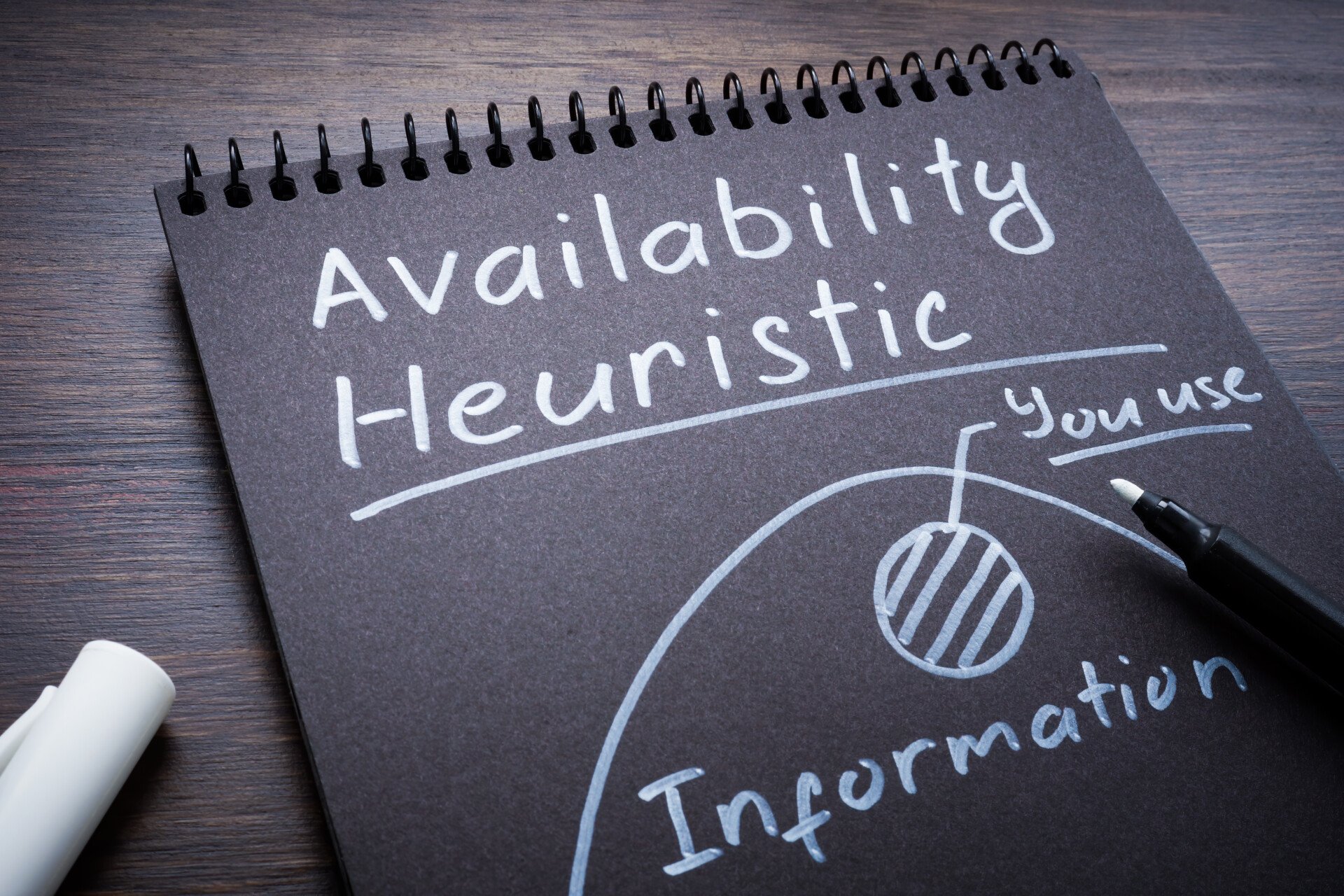

Availability Bias

Availability bias (also commonly referred to as the availability heuristic) refers to the tendency to think that examples of things that readily come to mind are more common than they actually are.

In other words, information that comes to mind faster influences the decisions we make about the future. And just like with the hindsight bias, this bias is related to an error of memory.

But instead of being a memory fabrication, it is an overemphasis on a certain memory.

In the workplace, if someone is being considered for a promotion and their boss recalls one bad thing that happened years ago but left a lasting impression, that one event might have an outsized influence on the final decision.

Another common example is buying lottery tickets: the lifestyle and benefits of winning (and the emotions associated with winning or seeing other people win) come to mind more readily than the complex probability calculation of actually winning the lottery (Cherry, 2019).

A final common example used to demonstrate the availability heuristic describes how seeing several television shows or news reports about shark attacks (or anything else the news sensationalizes, such as serial killers or plane crashes) might make you think these incidents are relatively common, even though they are rare.

Regardless, this thinking might make you less inclined to go in the water the next time you go to the beach (Cherry, 2019).

As with most cognitive biases, the best way to overcome them is by recognizing the bias and being more cognizant of your thoughts and decisions.

And because we fall victim to this bias when our brain relies on quick mental shortcuts in order to save time, slowing down our thinking and decision-making process is a crucial step to mitigating the effects of the availability heuristic.

Researchers think this bias occurs because the brain is constantly trying to minimize the effort necessary to make decisions, and so we rely on certain memories – ones that we can recall more easily – instead of having to endure the complicated task of calculating statistical probabilities.

Two main types of memories are easier to recall: 1) those that more closely align with the way we see the world and 2) those that evoke more emotion and leave a more lasting impression.

This first type of memory was identified in 1973, when Tversky and Kahneman, our cognitive bias pioneers, conducted a study in which they asked participants if more words begin with the letter K or if more words have K as their third letter.

Although many more words have K as their third letter, 70% of participants said that more words begin with K, because such words are not only easier to recall but also fit more closely with the way they see the world (we are far more used to thinking of words by their first letter than by their third).

In terms of the second type of memory, the same duo ran an experiment in 1983, 10 years later, in which half the participants were asked to estimate the likelihood of a massive flood occurring somewhere in North America, and the other half had to estimate the likelihood of a flood occurring due to an earthquake in California.

Although the latter is much less likely, participants still said that this would be much more common because they could recall specific, emotionally charged events of earthquakes hitting California, largely due to the news coverage they receive.

Together, these studies highlight how memories that are easier to recall greatly influence our judgments and perceptions about future events.

Inattentional Blindness

A final popular form of cognitive bias is inattentional blindness. This occurs when a person fails to notice a stimulus that is in plain sight because their attention is directed elsewhere.

For example, while driving a car, you might be so focused on the road ahead of you that you completely fail to notice a car swerve into your lane of traffic.

Because your attention is directed elsewhere, you aren’t able to react in time, potentially leading to a car accident. Experiencing inattentional blindness has its obvious consequences (as illustrated by this example), but, like all biases, it is not impossible to overcome.

Many theories seek to explain why we experience this form of cognitive bias. In reality, it is probably some combination of these explanations.

Conspicuity holds that certain sensory stimuli (such as bright colors) and cognitive stimuli (such as something familiar) are more likely to be processed, and so stimuli that don’t fit into one of these two categories might be missed.

The mental workload theory describes how when we focus a lot of our brain’s mental energy on one stimulus, we are using up our cognitive resources and won’t be able to process another stimulus simultaneously.

Similarly, some psychologists explain how we attend to different stimuli with varying levels of attentional capacity, which might affect our ability to process multiple stimuli simultaneously.

In other words, an experienced driver might be able to see that car swerve into the lane because they are using fewer mental resources to drive, whereas a beginner driver might be using more resources to focus on the road ahead and unable to process that car swerving in.

A final explanation argues that because our attentional and processing resources are limited, our brain dedicates them to what fits into our schemas or our cognitive representations of the world (Cherry, 2020).

Thus, when an unexpected stimulus comes into our line of sight, we might not be able to process it on the conscious level. The following example illustrates how this might happen.

The most famous study to demonstrate the inattentional blindness phenomenon is the invisible gorilla study (Most et al., 2001). This experiment asked participants to watch a video of two groups passing a basketball and count how many times the white team passed the ball.

Participants are able to report the number of passes accurately, but many fail to notice a gorilla walking directly through the middle of the circle.

Because this would not be expected, and because our brain is using up its resources to count the number of passes, we completely fail to process something right before our eyes.

A real-world example of inattentional blindness occurred in 1995 when Boston police officer Kenny Conley was chasing a suspect and ran by a group of officers who were mistakenly holding down an undercover cop.

Conley was convicted of perjury and obstruction of justice because he supposedly saw the fight between the undercover cop and the other officers and lied about it to protect the officers, but he stood by his word that he really hadn’t seen it (due to inattentional blindness) and was ultimately exonerated (Pickel, 2015).

The key to overcoming inattentional blindness is to maximize your attention by avoiding distractions such as checking your phone. And it is also important to pay attention to what other people might not notice (if you are that driver, don’t always assume that others can see you).

By working on expanding your attention and minimizing unnecessary distractions that will use up your mental resources, you can work towards overcoming this bias.

Preventing Cognitive Bias

As we know, recognizing these biases is the first step to overcoming them. But there are other small strategies we can follow in order to train our unconscious mind to think in different ways.

From strengthening our memory and minimizing distractions to slowing down our decision-making and improving our reasoning skills, we can work towards overcoming these cognitive biases.

Evaluating your own thought process, known as metacognition (“thinking about thinking”), provides an opportunity to combat bias (Flavell, 1979).

This multifactorial process involves (Croskerry, 2003):

(a) acknowledging the limitations of memory, (b) seeking perspective while making decisions, (c) being able to self-critique, (d) choosing strategies to prevent cognitive error.

Many of the strategies for avoiding bias described here are also known as cognitive forcing strategies, which are mental tools used to force unbiased decision-making.

The History of Cognitive Bias

The term cognitive bias was coined in the 1970s by Israeli psychologists Amos Tversky and Daniel Kahneman, who used it to describe people’s flawed thinking patterns in response to judgment and decision problems (Tversky & Kahneman, 1974).

Tversky and Kahneman’s research program, the heuristics and biases program, investigated how people make decisions given limited resources (for example, limited time to decide which food to eat or limited information to decide which house to buy).

As a result of these limited resources, people are forced to rely on heuristics or quick mental shortcuts to help make their decisions.

Tversky and Kahneman wanted to understand the biases associated with this judgment and decision-making process.

To do so, the two researchers relied on a research paradigm that presented participants with some type of reasoning problem with a computed normative answer (they used probability theory and statistics to compute the expected answer).

Participants’ responses were then compared with the predetermined solution to reveal systematic deviations in their reasoning.

After running several experiments with countless reasoning problems, the researchers were able to identify numerous norm violations that result when our minds rely on these cognitive biases to make decisions and judgments (Wilke & Mata, 2012).

Key Takeaways

- Cognitive biases are unconscious errors in thinking that arise from problems related to memory, attention, and other mental mistakes.

- These biases result from our brain’s efforts to simplify the incredibly complex world in which we live.

- Confirmation bias, hindsight bias, mere exposure effect, self-serving bias, base rate fallacy, anchoring bias, availability bias, the framing effect, inattentional blindness, and the ecological fallacy are some of the most common examples of cognitive bias. Another example is the false consensus effect.

- Cognitive biases directly affect our safety, interactions with others, and how we make judgments and decisions in our daily lives.

- Although these biases are unconscious, there are small steps we can take to train our minds to adopt a new pattern of thinking and mitigate the effects of these biases.

Allen, M. S., Robson, D. A., Martin, L. J., & Laborde, S. (2020). Systematic review and meta-analysis of self-serving attribution biases in the competitive context of organized sport. Personality and Social Psychology Bulletin, 46(7), 1027–1043.

Casad, B. (2019). Confirmation bias. Retrieved from https://www.britannica.com/science/confirmation-bias

Cherry, K. (2019). How the availability heuristic affects your decision-making. Retrieved from https://www.verywellmind.com/availability-heuristic-2794824

Cherry, K. (2020). Inattentional blindness can cause you to miss things in front of you. Retrieved from https://www.verywellmind.com/what-is-inattentional-blindness-2795020

Dietrich, D., & Olson, M. (1993). A demonstration of hindsight bias using the Thomas confirmation vote. Psychological Reports, 72(2), 377–378.

Fischhoff, B. (1975). Hindsight is not equal to foresight: The effect of outcome knowledge on judgment under uncertainty. Journal of Experimental Psychology: Human Perception and Performance, 1(3), 288.

Fischhoff, B., & Beyth, R. (1975). I knew it would happen: Remembered probabilities of once-future things. Organizational Behavior and Human Performance, 13(1), 1–16.

Furnham, A. (1982). Explanations for unemployment in Britain. European Journal of Social Psychology, 12(4), 335–352.

Heider, F. (1982). The psychology of interpersonal relations. Psychology Press.

Inman, M. (2016). Hindsight bias. Retrieved from https://www.britannica.com/topic/hindsight-bias

Lang, R. (2019). What is the difference between conscious and unconscious bias?: FAQs. Retrieved from https://engageinlearning.com/faq/compliance/unconscious-bias/what-is-the-difference-between-conscious-and-unconscious-bias/

Luippold, B., Perreault, S., & Wainberg, J. (2015). Auditor’s pitfall: Five ways to overcome confirmation bias. Retrieved from https://www.babson.edu/academics/executive-education/babson-insight/finance-and-accounting/auditors-pitfall-five-ways-to-overcome-confirmation-bias/

Mezulis, A. H., Abramson, L. Y., Hyde, J. S., & Hankin, B. L. (2004). Is there a universal positivity bias in attributions? A meta-analytic review of individual, developmental, and cultural differences in the self-serving attributional bias. Psychological Bulletin, 130(5), 711.

Miller, D. T., & Ross, M. (1975). Self-serving biases in the attribution of causality: Fact or fiction? Psychological Bulletin, 82(2), 213.

Most, S. B., Simons, D. J., Scholl, B. J., Jimenez, R., Clifford, E., & Chabris, C. F. (2001). How not to be seen: The contribution of similarity and selective ignoring to sustained inattentional blindness. Psychological Science, 12(1), 9–17.

Mussweiler, T., & Strack, F. (1999). Hypothesis-consistent testing and semantic priming in the anchoring paradigm: A selective accessibility model. Journal of Experimental Social Psychology, 35(2), 136–164.

Neff, K. (2003). Self-compassion: An alternative conceptualization of a healthy attitude toward oneself. Self and Identity, 2(2), 85–101.

Nickerson, R. S. (1998). Confirmation bias: A ubiquitous phenomenon in many guises. Review of General Psychology, 2(2), 175–220.

Orzan, G., Zara, I. A., & Purcarea, V. L. (2012). Neuromarketing techniques in pharmaceutical drugs advertising. A discussion and agenda for future research. Journal of Medicine and Life, 5(4), 428.

Pickel, K. L. (2015). Eyewitness memory. In The handbook of attention (pp. 485–502).

Pohl, R. F., & Hell, W. (1996). No reduction in hindsight bias after complete information and repeated testing. Organizational Behavior and Human Decision Processes, 67(1), 49–58.

Roese, N. J., & Vohs, K. D. (2012). Hindsight bias. Perspectives on Psychological Science, 7(5), 411–426.

Ross, L. (1977). The intuitive psychologist and his shortcomings: Distortions in the attribution process. In Advances in experimental social psychology (Vol. 10, pp. 173–220). Academic Press.

Tversky, A., & Kahneman, D. (1973). Availability: A heuristic for judging frequency and probability. Cognitive Psychology, 5(2), 207–232.

Tversky, A., & Kahneman, D. (1974). Judgment under uncertainty: Heuristics and biases. Science, 185(4157), 1124–1131.

Tversky, A., & Kahneman, D. (1983). Extensional versus intuitive reasoning: The conjunction fallacy in probability judgment. Psychological Review, 90(4), 293.

Tversky, A., & Kahneman, D. (1992). Advances in prospect theory: Cumulative representation of uncertainty. Journal of Risk and Uncertainty, 5(4), 297–323.

Walther, J. B., & Bazarova, N. N. (2007). Misattribution in virtual groups: The effects of member distribution on self-serving bias and partner blame. Human Communication Research, 33(1), 1–26.

Wason, P. C. (1960). On the failure to eliminate hypotheses in a conceptual task. Quarterly Journal of Experimental Psychology, 12(3), 129–140.

Wegener, D. T., Petty, R. E., Detweiler-Bedell, B. T., & Jarvis, W. B. G. (2001). Implications of attitude change theories for numerical anchoring: Anchor plausibility and the limits of anchor effectiveness. Journal of Experimental Social Psychology, 37(1), 62–69.

Wilke, A., & Mata, R. (2012). Cognitive bias. In Encyclopedia of human behavior (pp. 531–535). Academic Press.

Further Information

Test yourself for bias.

- Project Implicit (IAT Test), from Harvard University

- Implicit Association Test, from the Social Psychology Network

- Test Yourself for Hidden Bias, from Teaching Tolerance

- How The Concept Of Implicit Bias Came Into Being, with Dr. Mahzarin Banaji, Harvard University, author of Blindspot: Hidden Biases of Good People (5:28 minutes; includes transcript)

- Understanding Your Racial Biases, with John Dovidio, PhD, Yale University, from the American Psychological Association (11:09 minutes; includes transcript)

- Talking Implicit Bias in Policing, with Jack Glaser, Goldman School of Public Policy, University of California Berkeley (21:59 minutes)

- Implicit Bias: A Factor in Health Communication, with Dr. Winston Wong, Kaiser Permanente (19:58 minutes)

- Bias, Black Lives and Academic Medicine, Dr. David Ansell on Your Health Radio, August 1, 2015 (21:42 minutes)

- Uncovering Hidden Biases, Google talk with Dr. Mahzarin Banaji, Harvard University

- Impact of Implicit Bias on the Justice System (9:14 minutes)

- Students Speak Up: What Bias Means to Them (2:17 minutes)

- Weight Bias in Health Care, from Yale University (16:56 minutes)

- Gender and Racial Bias In Facial Recognition Technology (4:43 minutes)

Journal Articles

- Mitchell, G. (2018). An implicit bias primer. Virginia Journal of Social Policy & the Law, 25, 27–59.

- Nosek, B. A., Greenwald, A. G., & Banaji, M. R. (2007). The Implicit Association Test at age 7: A methodological and conceptual review. Automatic Processes in Social Thinking and Behavior, 4, 265–292.

- Hall, W. J., Chapman, M. V., Lee, K. M., Merino, Y. M., Thomas, T. W., Payne, B. K., … & Coyne-Beasley, T. (2015). Implicit racial/ethnic bias among health care professionals and its influence on health care outcomes: A systematic review. American Journal of Public Health, 105(12), e60–e76.

- Burgess, D., Van Ryn, M., Dovidio, J., & Saha, S. (2007). Reducing racial bias among health care providers: Lessons from social-cognitive psychology. Journal of General Internal Medicine, 22(6), 882–887.

- Boysen, G. A. (2010). Integrating implicit bias into counselor education. Counselor Education & Supervision, 49(4), 210–227.

- Christian, S. (2013). Cognitive Biases and Errors as Cause—and Journalistic Best Practices as Effect. Journal of Mass Media Ethics, 28(3), 160–174.

- Whitford, D. K., & Emerson, A. M. (2019). Empathy intervention to reduce implicit bias in pre-service teachers. Psychological Reports, 122(2), 670–688.


Critical Thinking

Critical thinking is a widely accepted educational goal. Its definition is contested, but the competing definitions can be understood as differing conceptions of the same basic concept: careful thinking directed to a goal. Conceptions differ with respect to the scope of such thinking, the type of goal, the criteria and norms for thinking carefully, and the thinking components on which they focus. Its adoption as an educational goal has been recommended on the basis of respect for students’ autonomy and preparing students for success in life and for democratic citizenship. “Critical thinkers” have the dispositions and abilities that lead them to think critically when appropriate. The abilities can be identified directly; the dispositions indirectly, by considering what factors contribute to or impede exercise of the abilities. Standardized tests have been developed to assess the degree to which a person possesses such dispositions and abilities. Educational intervention has been shown experimentally to improve them, particularly when it includes dialogue, anchored instruction, and mentoring. Controversies have arisen over the generalizability of critical thinking across domains, over alleged bias in critical thinking theories and instruction, and over the relationship of critical thinking to other types of thinking.

1. History

Use of the term ‘critical thinking’ to describe an educational goal goes back to the American philosopher John Dewey (1910), who more commonly called it ‘reflective thinking’. He defined it as

active, persistent and careful consideration of any belief or supposed form of knowledge in the light of the grounds that support it, and the further conclusions to which it tends. (Dewey 1910: 6; 1933: 9)

and identified a habit of such consideration with a scientific attitude of mind. His lengthy quotations of Francis Bacon, John Locke, and John Stuart Mill indicate that he was not the first person to propose development of a scientific attitude of mind as an educational goal.

In the 1930s, many of the schools that participated in the Eight-Year Study of the Progressive Education Association (Aikin 1942) adopted critical thinking as an educational goal, for whose achievement the study’s Evaluation Staff developed tests (Smith, Tyler, & Evaluation Staff 1942). Glaser (1941) showed experimentally that it was possible to improve the critical thinking of high school students. Bloom’s influential taxonomy of cognitive educational objectives (Bloom et al. 1956) incorporated critical thinking abilities. Ennis (1962) proposed 12 aspects of critical thinking as a basis for research on the teaching and evaluation of critical thinking ability.

Since 1980, an annual international conference in California on critical thinking and educational reform has attracted tens of thousands of educators from all levels of education and from many parts of the world. Also since 1980, the state university system in California has required all undergraduate students to take a critical thinking course. Since 1983, the Association for Informal Logic and Critical Thinking has sponsored sessions in conjunction with the divisional meetings of the American Philosophical Association (APA). In 1987, the APA’s Committee on Pre-College Philosophy commissioned a consensus statement on critical thinking for purposes of educational assessment and instruction (Facione 1990a). Researchers have developed standardized tests of critical thinking abilities and dispositions; for details, see the Supplement on Assessment. Educational jurisdictions around the world now include critical thinking in guidelines for curriculum and assessment. Political and business leaders endorse its importance.

For details on this history, see the Supplement on History.

2. Examples and Non-Examples

Before considering the definition of critical thinking, it will be helpful to have in mind some examples of critical thinking, as well as some examples of kinds of thinking that would apparently not count as critical thinking.

2.1 Dewey’s Three Main Examples

Dewey (1910: 68–71; 1933: 91–94) takes as paradigms of reflective thinking three class papers of students in which they describe their thinking. The examples range from the everyday to the scientific.

Transit: “The other day, when I was down town on 16th Street, a clock caught my eye. I saw that the hands pointed to 12:20. This suggested that I had an engagement at 124th Street, at one o’clock. I reasoned that as it had taken me an hour to come down on a surface car, I should probably be twenty minutes late if I returned the same way. I might save twenty minutes by a subway express. But was there a station near? If not, I might lose more than twenty minutes in looking for one. Then I thought of the elevated, and I saw there was such a line within two blocks. But where was the station? If it were several blocks above or below the street I was on, I should lose time instead of gaining it. My mind went back to the subway express as quicker than the elevated; furthermore, I remembered that it went nearer than the elevated to the part of 124th Street I wished to reach, so that time would be saved at the end of the journey. I concluded in favor of the subway, and reached my destination by one o’clock.” (Dewey 1910: 68–69; 1933: 91–92)

Ferryboat: “Projecting nearly horizontally from the upper deck of the ferryboat on which I daily cross the river is a long white pole, having a gilded ball at its tip. It suggested a flagpole when I first saw it; its color, shape, and gilded ball agreed with this idea, and these reasons seemed to justify me in this belief. But soon difficulties presented themselves. The pole was nearly horizontal, an unusual position for a flagpole; in the next place, there was no pulley, ring, or cord by which to attach a flag; finally, there were elsewhere on the boat two vertical staffs from which flags were occasionally flown. It seemed probable that the pole was not there for flag-flying.

“I then tried to imagine all possible purposes of the pole, and to consider for which of these it was best suited: (a) Possibly it was an ornament. But as all the ferryboats and even the tugboats carried poles, this hypothesis was rejected. (b) Possibly it was the terminal of a wireless telegraph. But the same considerations made this improbable. Besides, the more natural place for such a terminal would be the highest part of the boat, on top of the pilot house. (c) Its purpose might be to point out the direction in which the boat is moving.

“In support of this conclusion, I discovered that the pole was lower than the pilot house, so that the steersman could easily see it. Moreover, the tip was enough higher than the base, so that, from the pilot's position, it must appear to project far out in front of the boat. Moreover, the pilot being near the front of the boat, he would need some such guide as to its direction. Tugboats would also need poles for such a purpose. This hypothesis was so much more probable than the others that I accepted it. I formed the conclusion that the pole was set up for the purpose of showing the pilot the direction in which the boat pointed, to enable him to steer correctly.” (Dewey 1910: 69–70; 1933: 92–93)

Bubbles: “In washing tumblers in hot soapsuds and placing them mouth downward on a plate, bubbles appeared on the outside of the mouth of the tumblers and then went inside. Why? The presence of bubbles suggests air, which I note must come from inside the tumbler. I see that the soapy water on the plate prevents escape of the air save as it may be caught in bubbles. But why should air leave the tumbler? There was no substance entering to force it out. It must have expanded. It expands by increase of heat, or by decrease of pressure, or both. Could the air have become heated after the tumbler was taken from the hot suds? Clearly not the air that was already entangled in the water. If heated air was the cause, cold air must have entered in transferring the tumblers from the suds to the plate. I test to see if this supposition is true by taking several more tumblers out. Some I shake so as to make sure of entrapping cold air in them. Some I take out holding mouth downward in order to prevent cold air from entering. Bubbles appear on the outside of every one of the former and on none of the latter. I must be right in my inference. Air from the outside must have been expanded by the heat of the tumbler, which explains the appearance of the bubbles on the outside. But why do they then go inside? Cold contracts. The tumbler cooled and also the air inside it. Tension was removed, and hence bubbles appeared inside. To be sure of this, I test by placing a cup of ice on the tumbler while the bubbles are still forming outside. They soon reverse.” (Dewey 1910: 70–71; 1933: 93–94)

2.2 Dewey’s Other Examples

Dewey (1910, 1933) sprinkles his book with other examples of critical thinking. We will refer to the following.

Weather: A man on a walk notices that it has suddenly become cool, thinks that it is probably going to rain, looks up and sees a dark cloud obscuring the sun, and quickens his steps (1910: 6–10; 1933: 9–13).

Disorder: A man finds his rooms on his return to them in disorder with his belongings thrown about, thinks at first of burglary as an explanation, then thinks of mischievous children as being an alternative explanation, then looks to see whether valuables are missing, and discovers that they are (1910: 82–83; 1933: 166–168).

Typhoid: A physician diagnosing a patient whose conspicuous symptoms suggest typhoid avoids drawing a conclusion until more data are gathered by questioning the patient and by making tests (1910: 85–86; 1933: 170).

Blur: A moving blur catches our eye in the distance, we ask ourselves whether it is a cloud of whirling dust or a tree moving its branches or a man signaling to us, we think of other traits that should be found on each of those possibilities, and we look and see if those traits are found (1910: 102, 108; 1933: 121, 133).

Suction pump: In thinking about the suction pump, the scientist first notes that it will draw water only to a maximum height of 33 feet at sea level and to a lesser maximum height at higher elevations, selects for attention the differing atmospheric pressure at these elevations, sets up experiments in which the air is removed from a vessel containing water (when suction no longer works) and in which the weight of air at various levels is calculated, compares the results of reasoning about the height to which a given weight of air will allow a suction pump to raise water with the observed maximum height at different elevations, and finally assimilates the suction pump to such apparently different phenomena as the siphon and the rising of a balloon (1910: 150–153; 1933: 195–198).

2.3 Further Examples

Diamond: A passenger in a car driving in a diamond lane reserved for vehicles with at least one passenger notices that the diamond marks on the pavement are far apart in some places and close together in others. Why? The driver suggests that the reason may be that the diamond marks are not needed where there is a solid double line separating the diamond lane from the adjoining lane, but are needed when there is a dotted single line permitting crossing into the diamond lane. Further observation confirms that the diamonds are close together when a dotted line separates the diamond lane from its neighbour, but otherwise far apart.

Rash: A woman suddenly develops a very itchy red rash on her throat and upper chest. She recently noticed a mark on the back of her right hand, but was not sure whether the mark was a rash or a scrape. She lies down in bed and thinks about what might be causing the rash and what to do about it. About two weeks before, she began taking blood pressure medication that contained a sulfa drug, and the pharmacist had warned her, in view of a previous allergic reaction to a medication containing a sulfa drug, to be on the alert for an allergic reaction; however, she had been taking the medication for two weeks with no such effect. The day before, she began using a new cream on her neck and upper chest; against the new cream as the cause was the mark on the back of her hand, which had not been exposed to the cream. She began taking probiotics about a month before. She also recently started new eye drops, but she supposed that manufacturers of eye drops would be careful not to include allergy-causing components in the medication. The rash might be a heat rash, since she recently was sweating profusely from her upper body. Since she is about to go away on a short vacation, where she would not have access to her usual physician, she decides to keep taking the probiotics and using the new eye drops but to discontinue the blood pressure medication and to switch back to the old cream for her neck and upper chest. She forms a plan to consult her regular physician on her return about the blood pressure medication.

Candidate: Although Dewey included no examples of thinking directed at appraising the arguments of others, such thinking has come to be considered a kind of critical thinking. We find an example of such thinking in the performance task on the Collegiate Learning Assessment (CLA+), which its sponsoring organization describes as

a performance-based assessment that provides a measure of an institution’s contribution to the development of critical-thinking and written communication skills of its students. (Council for Aid to Education 2017)

A sample task posted on its website requires the test-taker to write a report for public distribution evaluating a fictional candidate’s policy proposals and their supporting arguments, using supplied background documents, with a recommendation on whether to endorse the candidate.

2.4 Non-Examples

Immediate acceptance of an idea that suggests itself as a solution to a problem (e.g., a possible explanation of an event or phenomenon, an action that seems likely to produce a desired result) is “uncritical thinking, the minimum of reflection” (Dewey 1910: 13). On-going suspension of judgment in the light of doubt about a possible solution is not critical thinking (Dewey 1910: 108). Critique driven by a dogmatically held political or religious ideology is not critical thinking; thus Paulo Freire (1968 [1970]) is using the term (e.g., at 1970: 71, 81, 100, 146) in a more politically freighted sense that includes not only reflection but also revolutionary action against oppression. Derivation of a conclusion from given data using an algorithm is not critical thinking.

3. The Definition of Critical Thinking

What is critical thinking? There are many definitions. Ennis (2016) lists 14 philosophically oriented scholarly definitions and three dictionary definitions. Following Rawls (1971), who distinguished his conception of justice from a utilitarian conception but regarded them as rival conceptions of the same concept, Ennis maintains that the 17 definitions are different conceptions of the same concept. Rawls articulated the shared concept of justice as

a characteristic set of principles for assigning basic rights and duties and for determining… the proper distribution of the benefits and burdens of social cooperation. (Rawls 1971: 5)

Bailin et al. (1999b) claim that, if one considers what sorts of thinking an educator would take not to be critical thinking and what sorts to be critical thinking, one can conclude that educators typically understand critical thinking to have at least three features.

- It is done for the purpose of making up one’s mind about what to believe or do.

- The person engaging in the thinking is trying to fulfill standards of adequacy and accuracy appropriate to the thinking.

- The thinking fulfills the relevant standards to some threshold level.

One could sum up the core concept that involves these three features by saying that critical thinking is careful goal-directed thinking. This core concept seems to apply to all the examples of critical thinking described in the previous section. As for the non-examples, their exclusion depends on construing careful thinking as excluding jumping immediately to conclusions, suspending judgment no matter how strong the evidence, reasoning from an unquestioned ideological or religious perspective, and routinely using an algorithm to answer a question.

If the core of critical thinking is careful goal-directed thinking, conceptions of it can vary according to its presumed scope, its presumed goal, one’s criteria and threshold for being careful, and the thinking component on which one focuses. As to its scope, some conceptions (e.g., Dewey 1910, 1933) restrict it to constructive thinking on the basis of one’s own observations and experiments, others (e.g., Ennis 1962; Fisher & Scriven 1997; Johnson 1992) to appraisal of the products of such thinking. Ennis (1991) and Bailin et al. (1999b) take it to cover both construction and appraisal. As to its goal, some conceptions restrict it to forming a judgment (Dewey 1910, 1933; Lipman 1987; Facione 1990a). Others allow for actions as well as beliefs as the end point of a process of critical thinking (Ennis 1991; Bailin et al. 1999b). As to the criteria and threshold for being careful, definitions vary in the term used to indicate that critical thinking satisfies certain norms: “intellectually disciplined” (Scriven & Paul 1987), “reasonable” (Ennis 1991), “skillful” (Lipman 1987), “skilled” (Fisher & Scriven 1997), “careful” (Bailin & Battersby 2009). Some definitions specify these norms, referring variously to “consideration of any belief or supposed form of knowledge in the light of the grounds that support it and the further conclusions to which it tends” (Dewey 1910, 1933); “the methods of logical inquiry and reasoning” (Glaser 1941); “conceptualizing, applying, analyzing, synthesizing, and/or evaluating information gathered from, or generated by, observation, experience, reflection, reasoning, or communication” (Scriven & Paul 1987); the requirement that “it is sensitive to context, relies on criteria, and is self-correcting” (Lipman 1987); “evidential, conceptual, methodological, criteriological, or contextual considerations” (Facione 1990a); and “plus-minus considerations of the product in terms of appropriate standards (or criteria)” (Johnson 1992).
Stanovich and Stanovich (2010) propose to ground the concept of critical thinking in the concept of rationality, which they understand as combining epistemic rationality (fitting one’s beliefs to the world) and instrumental rationality (optimizing goal fulfillment); a critical thinker, in their view, is someone with “a propensity to override suboptimal responses from the autonomous mind” (2010: 227). These variant specifications of norms for critical thinking are not necessarily incompatible with one another, and in any case presuppose the core notion of thinking carefully. As to the thinking component singled out, some definitions focus on suspension of judgment during the thinking (Dewey 1910; McPeck 1981), others on inquiry while judgment is suspended (Bailin & Battersby 2009), others on the resulting judgment (Facione 1990a), and still others on the subsequent emotive response (Siegel 1988).

In educational contexts, a definition of critical thinking is a “programmatic definition” (Scheffler 1960: 19). It expresses a practical program for achieving an educational goal. For this purpose, a one-sentence formulaic definition is much less useful than articulation of a critical thinking process, with criteria and standards for the kinds of thinking that the process may involve. The real educational goal is recognition, adoption and implementation by students of those criteria and standards. That adoption and implementation in turn consists in acquiring the knowledge, abilities and dispositions of a critical thinker.

Conceptions of critical thinking generally do not include moral integrity as part of the concept. Dewey, for example, took critical thinking to be the ultimate intellectual goal of education, but distinguished it from the development of social cooperation among school children, which he took to be the central moral goal. Ennis (1996, 2011) added to his previous list of critical thinking dispositions a group of dispositions to care about the dignity and worth of every person, which he described as a “correlative” (1996) disposition without which critical thinking would be less valuable and perhaps harmful. An educational program that aimed at developing critical thinking but not the correlative disposition to care about the dignity and worth of every person, he asserted, “would be deficient and perhaps dangerous” (Ennis 1996: 172).

4. Its Value

Dewey thought that education for reflective thinking would be of value to both the individual and society; recognition in educational practice of the kinship to the scientific attitude of children’s native curiosity, fertile imagination and love of experimental inquiry “would make for individual happiness and the reduction of social waste” (Dewey 1910: iii). Schools participating in the Eight-Year Study took development of the habit of reflective thinking and skill in solving problems as a means to leading young people to understand, appreciate and live the democratic way of life characteristic of the United States (Aikin 1942: 17–18, 81). Harvey Siegel (1988: 55–61) has offered four considerations in support of adopting critical thinking as an educational ideal. (1) Respect for persons requires that schools and teachers honour students’ demands for reasons and explanations, deal with students honestly, and recognize the need to confront students’ independent judgment; these requirements concern the manner in which teachers treat students. (2) Education has the task of preparing children to be successful adults, a task that requires development of their self-sufficiency. (3) Education should initiate children into the rational traditions in such fields as history, science and mathematics. (4) Education should prepare children to become democratic citizens, which requires reasoned procedures and critical talents and attitudes. To supplement these considerations, Siegel (1988: 62–90) responds to two objections: the ideology objection that adoption of any educational ideal requires a prior ideological commitment and the indoctrination objection that cultivation of critical thinking cannot escape being a form of indoctrination.

Despite the diversity of our 11 examples, one can recognize a common pattern. Dewey analyzed it as consisting of five phases:

- suggestions, in which the mind leaps forward to a possible solution;

- an intellectualization of the difficulty or perplexity into a problem to be solved, a question for which the answer must be sought;

- the use of one suggestion after another as a leading idea, or hypothesis, to initiate and guide observation and other operations in collection of factual material;

- the mental elaboration of the idea or supposition as an idea or supposition (reasoning, in the sense on which reasoning is a part, not the whole, of inference); and

- testing the hypothesis by overt or imaginative action. (Dewey 1933: 106–107; italics in original)

The process of reflective thinking consisting of these phases would be preceded by a perplexed, troubled or confused situation and followed by a cleared-up, unified, resolved situation (Dewey 1933: 106). The term ‘phases’ replaced the term ‘steps’ (Dewey 1910: 72), thus removing the earlier suggestion of an invariant sequence. Variants of the above analysis appeared in Dewey (1916: 177) and Dewey (1938: 101–119).

The variant formulations indicate the difficulty of giving a single logical analysis of such a varied process. The process of critical thinking may have a spiral pattern, with the problem being redefined in the light of obstacles to solving it as originally formulated. For example, the person in Transit might have concluded that getting to the appointment at the scheduled time was impossible and have reformulated the problem as that of rescheduling the appointment for a mutually convenient time. Further, defining a problem does not always follow after or lead immediately to an idea of a suggested solution. Nor should it do so, as Dewey himself recognized in describing the physician in Typhoid as avoiding any strong preference for this or that conclusion before getting further information (Dewey 1910: 85; 1933: 170). People with a hypothesis in mind, even one to which they have a very weak commitment, have a so-called “confirmation bias” (Nickerson 1998): they are likely to pay attention to evidence that confirms the hypothesis and to ignore evidence that counts against it or for some competing hypothesis. Detectives, intelligence agencies, and investigators of airplane accidents are well advised to gather relevant evidence systematically and to postpone even tentative adoption of an explanatory hypothesis until the collected evidence rules out with the appropriate degree of certainty all but one explanation. Dewey’s analysis of the critical thinking process can be faulted as well for requiring acceptance or rejection of a possible solution to a defined problem, with no allowance for deciding in the light of the available evidence to suspend judgment. Further, given the great variety of kinds of problems for which reflection is appropriate, there is likely to be variation in its component events.

Perhaps the best way to conceptualize the critical thinking process is as a checklist whose component events can occur in a variety of orders, selectively, and more than once. These component events might include (1) noticing a difficulty, (2) defining the problem, (3) dividing the problem into manageable sub-problems, (4) formulating a variety of possible solutions to the problem or sub-problem, (5) determining what evidence is relevant to deciding among possible solutions to the problem or sub-problem, (6) devising a plan of systematic observation or experiment that will uncover the relevant evidence, (7) carrying out the plan of systematic observation or experimentation, (8) noting the results of the systematic observation or experiment, (9) gathering relevant testimony and information from others, (10) judging the credibility of testimony and information gathered from others, (11) drawing conclusions from gathered evidence and accepted testimony, and (12) accepting a solution that the evidence adequately supports (cf. Hitchcock 2017: 485).

Checklist conceptions of the process of critical thinking are open to the objection that they are too mechanical and procedural to fit the multi-dimensional and emotionally charged issues for which critical thinking is urgently needed (Paul 1984). For such issues, a more dialectical process is advocated, in which competing relevant world views are identified, their implications explored, and some sort of creative synthesis attempted.

If one considers the critical thinking process illustrated by the 11 examples, one can identify distinct kinds of mental acts and mental states that form part of it. To distinguish, label and briefly characterize these components is a useful preliminary to identifying abilities, skills, dispositions, attitudes, habits and the like that contribute causally to thinking critically. Identifying such abilities and habits is in turn a useful preliminary to setting educational goals. Setting the goals is in its turn a useful preliminary to designing strategies for helping learners to achieve the goals and to designing ways of measuring the extent to which learners have done so. Such measures provide both feedback to learners on their achievement and a basis for experimental research on the effectiveness of various strategies for educating people to think critically. Let us begin, then, by distinguishing the kinds of mental acts and mental events that can occur in a critical thinking process.

- Observing: One notices something in one’s immediate environment (sudden cooling of temperature in Weather, bubbles forming outside a glass and then going inside in Bubbles, a moving blur in the distance in Blur, a rash in Rash). Or one notes the results of an experiment or systematic observation (valuables missing in Disorder, no suction without air pressure in Suction pump).

- Feeling: One feels puzzled or uncertain about something (how to get to an appointment on time in Transit, why the diamonds vary in frequency in Diamond). One wants to resolve this perplexity. One feels satisfaction once one has worked out an answer (to take the subway express in Transit, diamonds closer when needed as a warning in Diamond).

- Wondering: One formulates a question to be addressed (why bubbles form outside a tumbler taken from hot water in Bubbles, how suction pumps work in Suction pump, what caused the rash in Rash).

- Imagining: One thinks of possible answers (bus or subway or elevated in Transit, flagpole or ornament or wireless communication aid or direction indicator in Ferryboat, allergic reaction or heat rash in Rash).

- Inferring: One works out what would be the case if a possible answer were assumed (valuables missing if there has been a burglary in Disorder, earlier start to the rash if it is an allergic reaction to a sulfa drug in Rash). Or one draws a conclusion once sufficient relevant evidence is gathered (take the subway in Transit, burglary in Disorder, discontinue blood pressure medication and new cream in Rash).

- Knowledge: One uses stored knowledge of the subject-matter to generate possible answers or to infer what would be expected on the assumption of a particular answer (knowledge of a city’s public transit system in Transit, of the requirements for a flagpole in Ferryboat, of Boyle’s law in Bubbles, of allergic reactions in Rash).

- Experimenting: One designs and carries out an experiment or a systematic observation to find out whether the results deduced from a possible answer will occur (looking at the location of the flagpole in relation to the pilot’s position in Ferryboat, putting an ice cube on top of a tumbler taken from hot water in Bubbles, measuring the height to which a suction pump will draw water at different elevations in Suction pump, noticing the frequency of diamonds when movement to or from a diamond lane is allowed in Diamond).

- Consulting: One finds a source of information, gets the information from the source, and makes a judgment on whether to accept it. None of our 11 examples include searching for sources of information. In this respect they are unrepresentative, since most people nowadays have almost instant access to information relevant to answering any question, including many of those illustrated by the examples. However, Candidate includes the activities of extracting information from sources and evaluating its credibility.

- Identifying and analyzing arguments: One notices an argument and works out its structure and content as a preliminary to evaluating its strength. This activity is central to Candidate. It is an important part of a critical thinking process in which one surveys arguments for various positions on an issue.

- Judging: One makes a judgment on the basis of accumulated evidence and reasoning, such as the judgment in Ferryboat that the purpose of the pole is to provide direction to the pilot.

- Deciding: One makes a decision on what to do or on what policy to adopt, as in the decision in Transit to take the subway.

By definition, a person who does something voluntarily is both willing and able to do that thing at that time. Both the willingness and the ability contribute causally to the person’s action, in the sense that the voluntary action would not occur if either (or both) of these were lacking. For example, suppose that one is standing with one’s arms at one’s sides and one voluntarily lifts one’s right arm to an extended horizontal position. One would not do so if one were unable to lift one’s arm, if for example one’s right side was paralyzed as the result of a stroke. Nor would one do so if one were unwilling to lift one’s arm, if for example one were participating in a street demonstration at which a white supremacist was urging the crowd to lift their right arm in a Nazi salute and one were unwilling to express support in this way for the racist Nazi ideology. The same analysis applies to a voluntary mental process of thinking critically. It requires both willingness and ability to think critically, including willingness and ability to perform each of the mental acts that compose the process and to coordinate those acts in a sequence that is directed at resolving the initiating perplexity.

Consider willingness first. We can identify causal contributors to willingness to think critically by considering factors that would cause a person who was able to think critically about an issue nevertheless not to do so (Hamby 2014). For each factor, the opposite condition thus contributes causally to willingness to think critically on a particular occasion. For example, people who habitually jump to conclusions without considering alternatives will not think critically about issues that arise, even if they have the required abilities. The contrary condition of willingness to suspend judgment is thus a causal contributor to thinking critically.

Now consider ability. In contrast to the ability to move one’s arm, which can be completely absent because a stroke has left the arm paralyzed, the ability to think critically is a developed ability, whose absence is not a complete absence of ability to think but absence of ability to think well. We can identify the ability to think well directly, in terms of the norms and standards for good thinking. In general, to be able to do well the thinking activities that can be components of a critical thinking process, one needs to know the concepts and principles that characterize their good performance, to recognize in particular cases that the concepts and principles apply, and to apply them. The knowledge, recognition and application may be procedural rather than declarative. It may be domain-specific rather than widely applicable, and in either case may need subject-matter knowledge, sometimes of a deep kind.

Reflections of the sort illustrated by the previous two paragraphs have led scholars to identify the knowledge, abilities and dispositions of a “critical thinker”, i.e., someone who thinks critically whenever it is appropriate to do so. We turn now to these three types of causal contributors to thinking critically. We start with dispositions, since arguably these are the most powerful contributors to being a critical thinker, can be fostered at an early stage of a child’s development, and are susceptible to general improvement (Glaser 1941: 175).

8. Critical Thinking Dispositions

Educational researchers use the term ‘dispositions’ broadly for the habits of mind and attitudes that contribute causally to being a critical thinker. Some writers (e.g., Paul & Elder 2006; Hamby 2014; Bailin & Battersby 2016) propose to use the term ‘virtues’ for this dimension of a critical thinker. The virtues in question, although they are virtues of character, concern the person’s ways of thinking rather than the person’s ways of behaving towards others. They are not moral virtues but intellectual virtues, of the sort articulated by Zagzebski (1996) and discussed by Turri, Alfano, and Greco (2017).